SanDisk Ultra II (240GB) SSD Review

by Kristian Vättö on September 16, 2014 2:00 PM EST

Two years ago Samsung dropped the bomb by releasing the first TLC NAND based SSD. I still vividly remember Anand's reaction when I told him about the SSD 840. I was in Korea for the launch event and was sitting in a press room where Samsung held the announcement. Samsung had only sampled us and everyone else with the 840 Pro, so the 840 and its guts had remained a mystery. As soon as Samsung lifted the curtain on the 840 specs, I shot Anand a message telling him that it was TLC NAND based. The reason I still remember this so clearly is that I had to tell Anand three times, "Yes, I am absolutely sure and am not kidding," before he took my word for it.

For nearly two years Samsung was the only manufacturer with an SSD that utilized TLC NAND. Most of the other manufacturers have talked about TLC SSDs in one way or another, but nobody has come up with anything retail-worthy... until now. A month ago SanDisk took the stage and unveiled their Ultra II, the company's first TLC SSD and the first TLC SSD not made by Samsung. Obviously, there's a lot to discuss, but let's start with a quick overview of TLC and the market landscape.

There are a variety of reasons for Samsung's head start in the TLC game. Samsung is the only client SSD manufacturer with a fully integrated business model: everything inside Samsung SSDs is designed and manufactured by Samsung. That is unique in the industry because even though the likes of SanDisk and Micron/Crucial manufacture NAND and develop their own custom firmware, they rely on Marvell's controllers for their client drives. Third party silicon always creates some limitations because it is designed based on the needs of several customers, whereas in-house silicon can be designed for a specific application and firmware architecture.

Furthermore, Samsung and SK Hynix are the only NAND manufacturers that are not in a NAND joint venture. Without a partner, Samsung has full freedom to dedicate as many (or as few) resources and as much fab space to TLC development and production as it sees fit, while SanDisk must coordinate with Toshiba to ensure that both companies are satisfied with the development and production strategy.

From what I have heard, the two major problems with TLC have been a late production ramp-up and low volume. In other words, it has taken two or three quarters longer for TLC NAND to become mature enough for SSDs than for MLC NAND at the same node, and by then a new MLC node is already sampling and will be available for SSD use within a couple of quarters. It has simply made sense to wait for the smaller and more cost-efficient MLC node instead of focusing development resources on a TLC SSD that would become obsolete in a matter of months.

SanDisk and Toshiba seem to have revised their strategy, though, because their second generation 19nm TLC is already SSD-grade and the production of both MLC and TLC flavors of the 15nm node is ramping up as we speak. Maybe TLC is finally becoming a first class citizen in the fab world. Today's review will help tell us more about the state of TLC NAND outside of Samsung's world. I am not going to cover the technical aspects of TLC here because we have done that several times before, so take a look at the links in case you need a refresher on how TLC works and how it differs from SLC/MLC.

The Ultra II is available in four capacities: 120GB, 240GB, 480GB and 960GB. The 120GB and 240GB models are shipping already, but the larger 480GB and 960GB models will be available in about a month. All come in a 2.5" 7mm form factor with a 9.5mm spacer included. There are no mSATA or M.2 models available and from what I was told there are not any in the pipeline either (at least for retail). SanDisk has always been rather conservative with their retail lineup and they have not been interested in the small niches that mSATA and M.2 currently offer, so sticking with 2.5" for now is a logical decision.

SanDisk Ultra II Specifications

| Capacity | 120GB | 240GB | 480GB | 960GB |
|---|---|---|---|---|
| Controller | Marvell 88SS9190 | Marvell 88SS9190 | Marvell 88SS9189 | Marvell 88SS9189 |
| NAND | SanDisk 2nd Gen 128Gbit 19nm TLC | SanDisk 2nd Gen 128Gbit 19nm TLC | SanDisk 2nd Gen 128Gbit 19nm TLC | SanDisk 2nd Gen 128Gbit 19nm TLC |
| Sequential Read | 550MB/s | 550MB/s | 550MB/s | 550MB/s |
| Sequential Write | 500MB/s | 500MB/s | 500MB/s | 500MB/s |
| 4KB Random Read | 81K IOPS | 91K IOPS | 98K IOPS | 99K IOPS |
| 4KB Random Write | 80K IOPS | 83K IOPS | 83K IOPS | 83K IOPS |
| Idle Power (Slumber) | 75mW | 75mW | 85mW | 85mW |
| Max Power (Read/Write) | 2.5W / 3.3W | 2.7W / 4.5W | 2.7W / 4.5W | 2.9W / 4.6W |
| Encryption | N/A | N/A | N/A | N/A |
| Warranty | Three years | Three years | Three years | Three years |
| Retail Pricing | $80 | $112 | $200 | $500 |

There are two different controller configurations: the 120GB and 240GB models use the 4-channel 9190 "Renoir Lite" controller, whereas the higher capacity models use the full 8-channel 9189 "Renoir" silicon. To my knowledge there is no difference besides the channel count (and perhaps internal cache sizes), and the "Lite" version is cheaper. SanDisk has done this before with the X300s, for instance, so having two different controllers is nothing new, and it makes sense because the smaller capacities cannot take full advantage of all eight channels anyway. Note that the 9189/9190 is not Marvell's new TLC-optimized 1074 controller; it is the same controller that is used in Crucial's MX100, for example.

Similar to the rest of SanDisk's client SSD lineup, the Ultra II does not offer any encryption support. For now SanDisk is only offering encryption in the X300s, but in the future TCG Opal 2.0 and eDrive support will very likely make their way to the client drives as well. DevSleep is not supported either, and SanDisk said the reason is that the niche for aftermarket DevSleep-enabled SSDs is practically non-existent. Nearly all platforms that support DevSleep (and there are actually very few) already ship with an SSD, e.g. laptops, so DevSleep is not a feature that aftermarket buyers find valuable.

nCache 2.0

The Ultra II offers rather impressive performance numbers for a TLC drive, as even the smallest 120GB model is capable of 550MB/s read and 500MB/s write. The secret behind the performance is the new nCache 2.0, which takes SanDisk's pseudo-SLC caching mode to the next level. While the original nCache was mainly designed for caching the NAND mapping table along with some small writes (<4KB), version 2.0 caches all writes regardless of their size and type by increasing the capacity of the pseudo-SLC portion. nCache 1.0 had an SLC portion of less than 1GB (SanDisk never revealed the actual size), but version 2.0 runs 5GB of the NAND in SLC mode for every 120GB of user space.

nCache 2.0 Overview

| Capacity | 120GB | 240GB | 480GB | 960GB |
|---|---|---|---|---|
| SLC Cache Size | 5GB | 10GB | 20GB | 40GB |

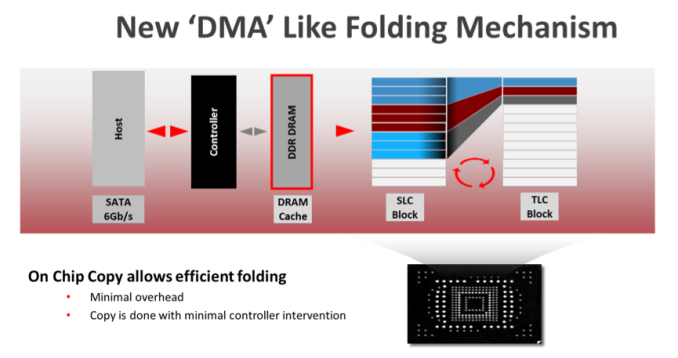

The interesting tidbit about SanDisk's implementation is the fact that each NAND die has a fixed number of blocks that run in SLC mode. The benefit is that when data has to be moved from the SLC to the TLC portion, the transfer can be done internally in the die, which is a feature SanDisk calls On Chip Copy. This is a proprietary design and uses a special die, so you will not see any competitive products using the same architecture. Normally the SLC to TLC transfer would be done like any other wear-leveling operation by using the NAND interface (Toggle or ONFI) and DRAM to move the data around internally from die to die, but the downside is that such a design may interrupt host IO processing since the internal operations occupy the NAND interface and DRAM.

OCZ's "Performance Mode" is a good example of a competing technology: once the fast buffer is full, the write speed drops to half because in addition to the host IOs, the drive now has to move the data from SLC to MLC/TLC, which increases overhead since there is additional load on the controller, the NAND interfaces, and the NAND itself. Performance recovers once the copy/reorganize operations are complete.

SanDisk's approach introduces minimal overhead because everything is done within the die. Since an SLC block holds exactly one third as much data as a TLC block, three SLC blocks are simply folded into one TLC block. Obviously there is still some additional latency if you are trying to access a page in a block that is in the middle of the folding operation, but the impact of that is far smaller than what a die-to-die transfer would cause.

On Chip Copy has a predefined threshold that triggers the folding mechanism, although SanDisk said that it is adaptive in the sense that it also looks at the data type and size to determine the best action. Idle time will also trigger On Chip Copy, but from what I was told there is no fixed idle-time threshold for that.
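To make the folding flow concrete, here is a toy model in Python. It is a sketch under loudly stated assumptions, not SanDisk's firmware: the block geometry (`PAGES_PER_SLC_BLOCK`), the `Die` class and the `fold_threshold` trigger are all hypothetical, and the real trigger is adaptive in ways SanDisk did not disclose. What it does show is the core idea of On Chip Copy: writes land in SLC first, and three full SLC blocks are folded into one TLC block without the data leaving the die.

```python
from dataclasses import dataclass, field

# Hypothetical geometry: SanDisk did not disclose real block sizes.
PAGES_PER_SLC_BLOCK = 4
# A TLC block stores three bits per cell, so it holds three SLC blocks' worth of data.
PAGES_PER_TLC_BLOCK = 3 * PAGES_PER_SLC_BLOCK


@dataclass
class Die:
    slc_blocks: int        # blocks this die permanently runs in SLC mode
    fold_threshold: int    # hypothetical trigger: fold when free SLC blocks drop to this
    full_slc: list = field(default_factory=list)  # completely written SLC blocks
    open_slc: list = field(default_factory=list)  # SLC block currently being filled
    tlc: list = field(default_factory=list)       # TLC blocks produced by folding

    def free_slc(self) -> int:
        used = len(self.full_slc) + (1 if self.open_slc else 0)
        return self.slc_blocks - used

    def write_page(self, page) -> None:
        # Every host write lands in the SLC portion first.
        self.open_slc.append(page)
        if len(self.open_slc) == PAGES_PER_SLC_BLOCK:
            self.full_slc.append(self.open_slc)
            self.open_slc = []
        # Threshold trigger: fold while the free SLC pool is running low.
        while self.free_slc() <= self.fold_threshold and len(self.full_slc) >= 3:
            self.fold()

    def idle(self) -> None:
        # Idle-time trigger: fold every complete group of three SLC blocks.
        while len(self.full_slc) >= 3:
            self.fold()

    def fold(self) -> None:
        # On Chip Copy: three full SLC blocks become one TLC block inside the die,
        # modeled here as a list concatenation (no controller or DRAM involved).
        a, b, c = self.full_slc[:3]
        del self.full_slc[:3]
        tlc_block = a + b + c
        assert len(tlc_block) == PAGES_PER_TLC_BLOCK
        self.tlc.append(tlc_block)
```

With `slc_blocks=6` and `fold_threshold=1`, writing 20 pages folds the first 12 into a single TLC block and leaves the last 8 in SLC until the next trigger. The model does not guard against a threshold set so low that the SLC pool overflows; a real drive obviously has to.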

In our 240GB sample the SLC cache size is 10GB and since sixteen 128Gbit (16GiB) NAND dies are needed for the raw NAND capacity of 256GiB, the cache per die works out to be 625MB. I am guessing that in reality there is 32GiB of TLC NAND running in SLC mode (i.e. 2GiB per die), which would mean 10.67GiB of SLC, but unfortunately SanDisk could not share the exact block sizes of TLC and MLC with us for competitive reasons.
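The per-die arithmetic, and the 2GiB-per-die guess, can be checked in a few lines of Python. The inputs are only the figures above; the 2GiB-per-die value is my guess from the text, not a SanDisk number.

```python
DIES = 16              # sixteen 128Gbit (16GiB) dies = 256GiB of raw NAND
SLC_CACHE_GB = 10      # SanDisk's quoted SLC cache for the 240GB model (decimal GB)

# Quoted cache spread evenly over the dies, in decimal MB
cache_per_die_mb = SLC_CACHE_GB * 1000 / DIES

# Guess from the text: 2GiB of TLC per die runs in SLC mode. A cell stores one bit
# in SLC mode instead of three, so every 3GiB of TLC yields 1GiB of SLC.
tlc_in_slc_mode_gib = 2 * DIES
slc_gib = tlc_in_slc_mode_gib / 3

print(f"{cache_per_die_mb:.0f}MB per die; "
      f"{tlc_in_slc_mode_gib}GiB of TLC in SLC mode gives {slc_gib:.2f}GiB of SLC")
```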

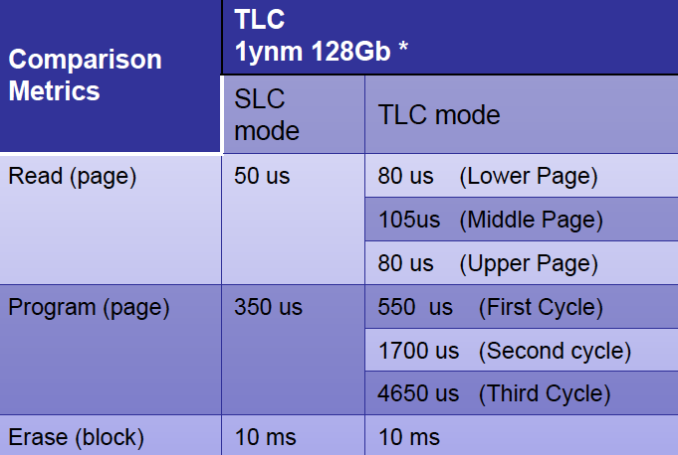

The performance benefits of the SLC mode are obvious. A TLC block requires multiple programming iterations because the distributions of the voltage states are much narrower, leaving less room for error, so programming is a longer and more complex process.
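A back-of-the-envelope sketch shows why the TLC states are so much tighter. It assumes, purely for illustration, that the usable voltage window has the same fixed width regardless of how many bits a cell stores (real windows and margins differ by process and vendor): each extra bit doubles the number of states, so every state gets half the room.

```python
def state_budget(bits_per_cell: int, window: float = 1.0) -> tuple[int, float]:
    """Return (number of voltage states, share of the window per state).

    Idealized: assumes a fixed, normalized voltage window split evenly
    between states, ignoring the guard bands real NAND needs.
    """
    states = 2 ** bits_per_cell
    return states, window / states


for name, bits in (("SLC", 1), ("MLC", 2), ("TLC", 3)):
    states, share = state_budget(bits)
    print(f"{name}: {states} states, {share:.3f} of the window each")
```

In this idealized picture a TLC state gets a quarter of the room an SLC state gets, which is why the charge has to be placed far more precisely, over more programming iterations.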

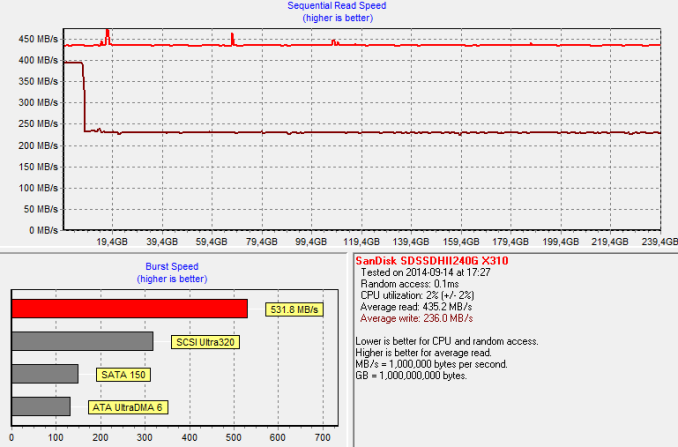

I ran HD Tach to see what the performance is across all LBAs. With sequential data the threshold for On Chip Copy seems to be about 8GB, because after that the performance drops from about 400MB/s to ~230MB/s. For average client workloads that is more than enough because users do not usually write more than ~10GB per day, and during idle time nCache 2.0 will also move data from SLC to TLC to ensure that the SLC cache has enough space for all incoming writes.
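As a rough illustration of what the drop means in practice, the sketch below estimates how long a large sequential copy takes under a two-speed model built from the HD Tach numbers above. It is an assumption-laden simplification: a hard 8GB switch point and constant speeds, with no adaptive triggering and no idle-time folding during the copy.

```python
def write_time_s(total_gb: float, cache_gb: float = 8.0,
                 fast_mbs: float = 400.0, slow_mbs: float = 230.0) -> float:
    """Seconds to write total_gb sequentially under a simple two-speed model.

    Assumes fast_mbs until cache_gb has been written and slow_mbs afterwards
    (decimal units, figures read off the HD Tach run).
    """
    fast_gb = min(total_gb, cache_gb)
    slow_gb = max(total_gb - cache_gb, 0.0)
    return fast_gb * 1000 / fast_mbs + slow_gb * 1000 / slow_mbs


for size_gb in (5, 10, 20):
    t = write_time_s(size_gb)
    print(f"{size_gb}GB: {t:.1f}s, average {size_gb * 1000 / t:.0f}MB/s")
```

Under these assumptions a 10GB copy, roughly a day of typical client writes, still averages close to 350MB/s.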

The improved performance is not the only benefit of nCache 2.0. Because everything gets written to the SLC portion first, the data can then be written sequentially to TLC. That minimizes write amplification on the TLC part, which in turn increases endurance because there are fewer redundant NAND writes. With sequential writes it is typically possible to achieve write amplification very close to 1x (i.e. the minimum without compression), and in fact SanDisk claims write amplification of about 0.8x for typical client workloads (for the TLC portion, that is). That is because not all data makes it to the TLC in the first place: some data will be deleted while it is still in the SLC cache and thus will not cause any wear on the TLC. Remember, TLC is generally only good for about 500-1,000 P/E cycles, whereas SLC can easily surpass 30,000 cycles even at 19nm, so utilizing the SLC cache as much as possible is crucial for endurance with TLC at such small lithographies.
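Why TLC write amplification can dip below 1x becomes clearer with a toy model. Assume (hypothetically; this is not SanDisk's algorithm) that the SLC cache keeps only the newest copy of each logical page and folds everything it holds to TLC once it contains a fixed number of distinct pages. Any page overwritten while still in SLC never reaches TLC, so the TLC portion sees fewer writes than the host issued. Deletions via TRIM would have the same effect but are not modeled.

```python
def tlc_write_amplification(host_writes: list[int], cache_pages: int) -> float:
    """Ratio of TLC page writes to host page writes in a toy SLC-cache model.

    host_writes is a sequence of logical page numbers (LBAs). The cache keeps the
    newest copy of each LBA and folds all of them to TLC once it holds
    cache_pages distinct LBAs; overwritten copies are simply dropped.
    """
    cache = {}        # lba -> index of the newest host write for that lba
    tlc_writes = 0
    for i, lba in enumerate(host_writes):
        cache[lba] = i                 # an overwrite replaces the cached copy
        if len(cache) == cache_pages:
            tlc_writes += len(cache)   # fold: each valid page is written once to TLC
            cache.clear()
    tlc_writes += len(cache)           # flush the remainder, e.g. during idle time
    return tlc_writes / len(host_writes)
```

A purely sequential stream with no overwrites gives exactly 1.0x, while the short stream `[0, 1, 0, 1, 2, 3, 2, 3, 4, 5]` with a four-page cache gives 0.8x because two of its ten writes are superseded before folding. That the example lands on SanDisk's 0.8x is a coincidence of the chosen stream, not a model of real client traffic.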

Like the previous nCache 1.0, the 2.0 version is also used to cache the NAND mapping table to prevent data corruption and loss. SanDisk does not employ any power loss protection circuitry (i.e. capacitors) in client drives, but instead the SLC cache is used to flush the mapping table from the DRAM more often, which is possible due to the higher endurance and lower latency of SLC. That obviously does not provide the same level of protection as capacitors do because all writes in progress will be lost during a power failure, but it ensures that the NAND mapping table will not become corrupt and turn the drive into a brick. SanDisk actually has an extensive whitepaper on power loss protection and the techniques that are used, so those who are interested in the topic should find it a good read.

Multi Page Recovery (M.P.R)

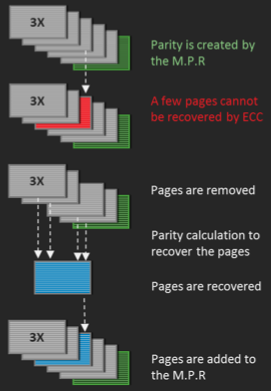

Using parity as a form of error correction has become more and more popular in the industry lately. SandForce made the first move with RAISE several years ago and nearly every manufacturer has released their own implementation since then. However, SanDisk has been one of the few that have not had any proper parity-based error correction in client SSDs, but the Ultra II changes that.

SanDisk's implementation is called Multi Page Recovery and as the name suggests, it provides page-level redundancy. The idea is exactly the same as with SandForce's RAISE, Micron's RAIN, and any other RAID 5-like scheme: parity is created for data that comes in, which can then be used to recover the data in case the ECC engine is not able to do it.
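The RAID 5-like idea can be sketched with byte-wise XOR parity over a stripe of five data pages plus one parity page. SanDisk has not disclosed how Multi Page Recovery actually computes parity or lays out stripes, so XOR, the stripe layout and the helper names here are assumptions for illustration; tiny byte strings stand in for real pages.

```python
def xor_pages(pages: list[bytes]) -> bytes:
    """Byte-wise XOR of equally sized pages."""
    out = bytearray(len(pages[0]))
    for page in pages:
        for i, b in enumerate(page):
            out[i] ^= b
    return bytes(out)


def make_stripe(data_pages: list[bytes]) -> list[bytes]:
    """Append one parity page to five data pages (the 5:1 ratio)."""
    assert len(data_pages) == 5
    return list(data_pages) + [xor_pages(data_pages)]


def recover(stripe: list[bytes], lost_index: int) -> bytes:
    """Rebuild one page the ECC engine could not correct from the other five."""
    survivors = [p for i, p in enumerate(stripe) if i != lost_index]
    return xor_pages(survivors)


stripe = make_stripe([f"page{i}".encode() for i in range(5)])
print(recover(stripe, 2))
```

Because the XOR of all six pages in a stripe is zero, any single lost page, data or parity, equals the XOR of the remaining five. Two failures in the same stripe cannot be recovered this way.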

The parity ratio in the Ultra II is 5:1, which means that there is one parity page for every five pages of actual data. But here is the tricky part: with 256GiB of raw NAND and a 5:1 parity ratio, the usable capacity could not be more than 229GB (about 213GiB) because one sixth of the NAND is dedicated to parity.

The secret is that the NAND dies are not really 128Gbit – they are in fact much larger than that. SanDisk could not give us the exact size due to competitive reasons, but told us that the 128Gbit number should be treated as MLC for it to make sense. Since TLC stores three bits per cell instead of two, it can store 50% more data in the same area, so 128Gbit of MLC would become 192Gbit of TLC. That is in a perfect world where every die is equal and there are no bad blocks; in reality TLC provides about a 30-40% density increase over MLC because TLC inherently has more bad blocks (e.g. stricter voltage requirements because there is less room for errors due to narrower distribution of the voltage states).

In this example, let's assume that TLC provides a 35% increase over MLC once the bad blocks are taken away. That turns our 128Gbit MLC die into a 173Gbit TLC die. Now, with nCache 2.0, every die has about 5Gbit of SLC, which eats away ~15Gbit of TLC (each cell stores one bit in SLC mode instead of three), and we end up with a die that has 158Gbit of usable capacity. Factor in the 5:1 parity ratio and the final user capacity is ~132Gbit per die. Sixteen of those would equal 264GiB of raw NAND, which is pretty close to the 256GiB we started with.
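The example is easy to retrace step by step. All inputs are the illustrative assumptions from the text (a 35% net TLC gain, ~5Gbit of SLC per die, 5:1 parity), not SanDisk figures, and the intermediate values are rounded the same way the text rounds them. As in the text, Gbit is treated as a binary unit, so 8Gbit make 1GiB.

```python
MLC_GBIT = 128                    # nominal die size, to be read as MLC per SanDisk
TLC_GAIN = 1.35                   # assumed net TLC density gain over MLC after bad blocks
SLC_GBIT = 5                      # ~625MB of SLC cache per die
DATA_PAGES, PARITY_PAGES = 5, 1   # 5:1 parity ratio
DIES = 16

tlc_gbit = round(MLC_GBIT * TLC_GAIN)       # 173Gbit of TLC
# One bit per cell in SLC mode costs three bits of TLC capacity.
after_cache = tlc_gbit - 3 * SLC_GBIT      # 158Gbit
# Only five of every six pages hold user data.
user_gbit = round(after_cache * DATA_PAGES / (DATA_PAGES + PARITY_PAGES))  # 132Gbit
# Sixteen dies, converted from Gbit to GiB (8 bits per byte)
total_gib = DIES * user_gbit / 8

print(tlc_gbit, after_cache, user_gbit, total_gib)
```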

Note that the above is just an example to help you understand how 5:1 parity ratio is possible. Like I said, SanDisk would not disclose the actual numbers and in the real world the raw NAND capacity may vary a bit because the number of bad blocks will vary from die to die. What matters, though, is that the Ultra II has the same 12.7% over-provisioning as the Extreme Pro, and that is after nCache 2.0 and Multi Page Recovery have been taken into account (i.e. 12.7% is dedicated to garbage collection, wear-leveling and the usual NAND management schemes).
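Both capacity figures in this section, the naive 229GB ceiling and the 12.7% over-provisioning, come down to conversions between decimal GB and binary GiB. The sketch below assumes over-provisioning is expressed as a share of the raw NAND, which is the definition that reproduces the 12.7% figure.

```python
GIB = 2**30
RAW_GIB = 256        # sixteen 16GiB dies
USER_GB = 240        # advertised capacity, decimal GB

user_gib = USER_GB * 1e9 / GIB

# Naive ceiling if one sixth of the raw NAND went to 5:1 parity
parity_ceiling_gb = RAW_GIB * 5 / 6 * GIB / 1e9

# Spare area as a share of the raw NAND
over_provisioning = (RAW_GIB - user_gib) / RAW_GIB

print(f"user capacity {user_gib:.1f}GiB, parity ceiling {parity_ceiling_gb:.0f}GB, "
      f"over-provisioning {over_provisioning:.1%}")
```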

Furthermore, all NAND dies have what are called spare bytes, which are additional bytes meant for ECC. For instance, Micron's 20nm MLC NAND has an actual page size of 17,600 bytes (16,384 bytes of user space + 1,216 spare bytes), so in reality a 128Gbit die is never truly 128Gbit; there is always a bit more for ECC and bad block management. The number of spare bytes has grown as the industry has moved to smaller process nodes because the need for ECC has increased and so has the number of bad blocks. TLC is just one step worse because it is inherently less reliable, hence more spare bytes are needed to make it usable in SSDs.
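Using the Micron page layout quoted above, the hidden spare area is easy to quantify. The sketch assumes, for simplicity, that every page on the die has this same layout and ignores any extra blocks set aside for bad block replacement.

```python
USER_BYTES = 16_384    # user data per page (Micron 20nm MLC, from the text)
SPARE_BYTES = 1_216    # spare bytes per page for ECC and management

page_bytes = USER_BYTES + SPARE_BYTES
spare_share = SPARE_BYTES / page_bytes
# A die sold as 128Gbit of user space physically stores proportionally more
physical_gbit = 128 * page_bytes / USER_BYTES

print(f"{page_bytes} bytes per page, {spare_share:.1%} spare, "
      f"{physical_gbit}Gbit physical for a 128Gbit die")
```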

Testing Endurance

SanDisk does not provide any specific endurance rating for the Ultra II, which is similar to what Samsung is doing with the SSD 840 EVO. The reason is that both are only validated for client usage, meaning that if you were to employ either of them in an enterprise environment, the warranty would be void anyway. I can see the reasoning behind not including a strict endurance rating for an entry-level client drive because consumers are not very good at understanding their endurance needs and having a rating (which would obviously be lower for a TLC drive) would just lead to confusion. However, the fact that SanDisk has not set any rating does not mean that I am not going to test endurance.

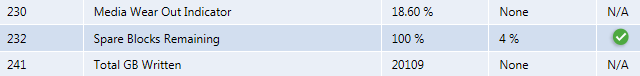

To do it, I turned to our standard endurance testing methodology. Basically, I wrote sequential 128KB data (QD1) to the drive and monitored SMART attributes 230 and 241, i.e. the Media Wear Out Indicator (MWI) and Total GB Written. In the case of the Ultra II, the MWI works the opposite way of what we are used to: it starts from 0% and increases as the drive wears out. When the MWI reaches 100%, the drive has come to the end of its rated lifespan. It will likely continue to work, because client SSD endurance ratings assume one year of data retention, but I would not recommend using it for any critical data after that point.

SanDisk Ultra II Endurance Test

| Metric | Result |
|---|---|
| Change in Media Wear Out Indicator | 7.8% |
| Change in Total GB Written | 9,232GiB |
| Observed Total Endurance | 118,359GiB |
| Observed P/E Cycles | ~530 |

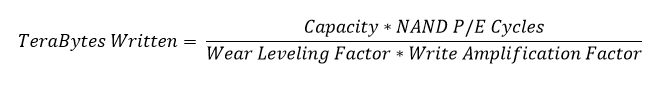

The table above summarizes the results of my test. The test ran for 12 hours and I took a few data points during the run to ensure that the results are valid. From the data, I extrapolated the total endurance and used it as the basis to calculate the P/E cycles with the following formula:

$$\text{P/E cycles} = \frac{\text{Total endurance (GiB)} \times \text{Wear leveling and write amplification factor}}{\text{Capacity (GiB)}}$$

I assumed that the combined wear leveling and write amplification factor is 1x, because that is plausible with purely sequential writes and it makes the calculation much simpler. For capacity I used the user capacity (240GB, i.e. 223.5GiB), so the observed P/E cycle count is simply the total endurance divided by the capacity (both in GiB).

The number I came up with is 530 P/E cycles. A number of factors make it practically impossible to figure out the exact NAND endurance, because part of the NAND operates in SLC mode with far greater endurance and there is a hefty amount of spare area for parity, but I think it is safe to say that the TLC NAND portion is rated at around 500 P/E cycles.
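The extrapolation and the P/E formula boil down to two divisions. This sketch assumes, as the test does, that the MWI rises linearly with data written and that the combined wear leveling and write amplification factor is 1x.

```python
GIB = 2**30

delta_mwi = 0.078            # SMART 230 (MWI) rose by 7.8% during the run
delta_written_gib = 9_232    # SMART 241 (Total GB Written) rose by 9,232GiB
capacity_gib = 240e9 / GIB   # user capacity, ~223.5GiB
wl_wa_factor = 1.0           # assumed combined wear leveling/write amplification factor

# Linear extrapolation: host writes needed to move the MWI from 0% to 100%
total_endurance_gib = delta_written_gib / delta_mwi
# P/E cycles = total endurance x factor / capacity
pe_cycles = total_endurance_gib * wl_wa_factor / capacity_gib

print(f"{total_endurance_gib:,.0f}GiB total, ~{pe_cycles:.0f} P/E cycles")
```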

SanDisk Ultra II Estimated Endurance

| Capacity | 120GB | 240GB | 480GB | 960GB |
|---|---|---|---|---|
| Total Estimated Endurance | 54.6TiB | 109.1TiB | 218.3TiB | 436.6TiB |
| Writes per Day | 20GiB | 20GiB | 20GiB | 20GiB |
| Write Amplification | 1.2x | 1.2x | 1.2x | 1.2x |
| Total Estimated Lifespan | 6.4 years | 12.8 years | 25.5 years | 51.0 years |

Because the P/E cycle count alone is easy to misunderstand, I put it into a context that is easier to grasp, i.e. the lifespan of the drive. All I did was multiply the user capacity by the P/E cycle count (rounded to 500) to get the total endurance, which I then used to calculate the estimated lifespan. I selected 20GiB of writes per day because even though SanDisk did not provide an endurance rating for the Ultra II, their internal design goal was 20GiB per day, which is a fairly common standard for client drives. I set the write amplification to 1.2x to leave some margin: the TLC blocks are written sequentially thanks to nCache 2.0, which should keep write amplification very close to 1x.
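The estimated endurance table can be regenerated from the stated inputs: 500 P/E cycles, 20GiB of host writes per day and 1.2x write amplification. The two functions below are just that arithmetic, with capacities converted from decimal GB to binary GiB and TiB; the function and parameter names are my own.

```python
GIB, TIB = 2**30, 2**40


def endurance_tib(capacity_gb: float, pe_cycles: int = 500) -> float:
    """Total writes the user capacity can absorb at the given P/E count, in TiB."""
    return capacity_gb * 1e9 * pe_cycles / TIB


def lifespan_years(capacity_gb: float, pe_cycles: int = 500,
                   gib_per_day: float = 20, write_amp: float = 1.2) -> float:
    """Years until the P/E budget is used up at a constant daily write load."""
    endurance_gib = capacity_gb * 1e9 * pe_cycles / GIB
    # Each GiB written by the host costs write_amp GiB of NAND writes.
    return endurance_gib / (gib_per_day * write_amp) / 365


for cap in (120, 240, 480, 960):
    print(f"{cap}GB: {endurance_tib(cap):.1f}TiB, {lifespan_years(cap):.1f} years")
```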

500 P/E cycles certainly does not sound like much, but when you put it into context it is more than enough. At 20GiB a day, even the 120GB Ultra II will easily outlive the rest of the components. nCache 2.0 plays a huge role in making the Ultra II as durable as it is because it keeps the write amplification close to the ideal 1x. Without nCache 2.0, 500 P/E cycles would be a major problem, but as it stands I do not see endurance being an issue. Of course, if you write more than 20GiB per day and your workload is IO intensive in general, it is better to look for drives that are meant for heavier usage, such as the Extreme Pro and SSD 850 Pro.

A look at SanDisk's Updated SSD Dashboard

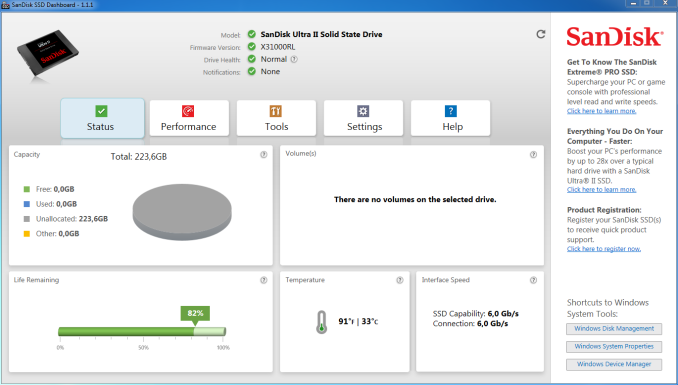

Along with the Ultra II, SanDisk is bringing an updated version of its SSD Dashboard, version 1.1.1.

The start view has not changed and provides the same overview of the drive as before.

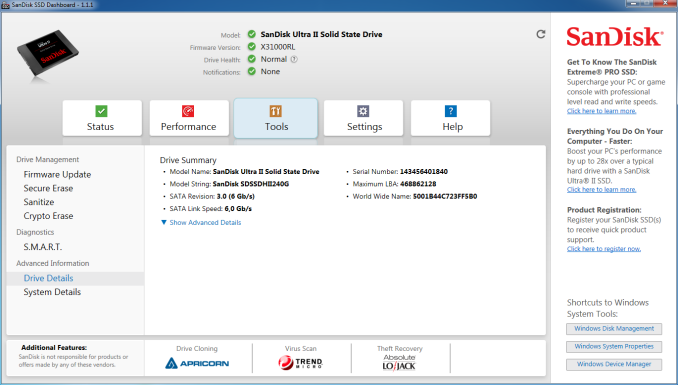

The most important new features are under the "Tools" tab and are provided by third parties. In the past SanDisk has not offered any cloning software with their drives, but the new version brings the option to use Apricorn's EZ GIG IV software to migrate from an old drive. Clicking the link in the Dashboard will lead to Apricorn's website where the user can download the software. Note that the Dashboard includes a special version of EZ GIG IV that can only be used to clone a drive once.

In addition to EZ GIG IV, version 1.1.1 adds Trend Micro's Titanium Antivirus+ software. While most people are already running antivirus software of some sort, there are (too) many people who do not have up-to-date antivirus software with definitions for the latest malware, so the idea behind including it is to give all users a free and easy way to check their system for malware before migrating to a new drive.

The new version also adds support for "sanitation". It is basically an enhanced version of secure erase and works the same way as 0-fill erase does: instead of just erasing the data, sanitation writes zeros to all LBAs to guarantee that there is absolutely no way to recover the old data. Crypto erase is now a part of the Dashboard too, although currently that is only supported by the X300s since it is the only drive with hardware encryption support.

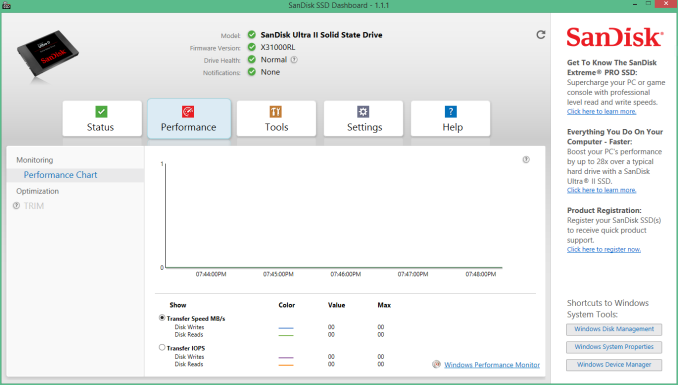

Live performance monitoring is also supported, but unfortunately there is no option to run a benchmark within the software (i.e. it just monitors the drive, similar to what Windows Performance Monitor does). For OSes without TRIM, the Dashboard includes an option to run a scheduled TRIM to ensure maximum performance. If TRIM is supported and enabled (as in this case, since I was running Windows 8.1), the TRIM tab is grayed out since the OS will take care of sending the TRIM commands when necessary.

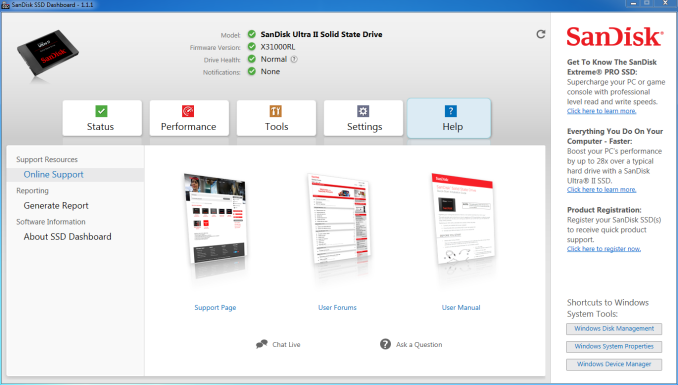

Support has also been improved in the new version as options for live chat and email support have been added. New languages have been added, too, and the Dashboard is now available in 17 different languages. During the install process, the installer will ask for the preferred language, but it is also possible to change that afterwards under the Settings tab.

Test Systems

For AnandTech Storage Benches, performance consistency, random and sequential performance, performance vs transfer size and load power consumption we use the following system:

| Component | Configuration |
|---|---|
| CPU | Intel Core i5-2500K running at 3.3GHz (Turbo & EIST enabled) |
| Motherboard | AsRock Z68 Pro3 |
| Chipset | Intel Z68 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | G.Skill RipjawsX DDR3-1600 4 x 8GB (9-9-9-24) |
| Video Card | Palit GeForce GTX 770 JetStream 2GB GDDR5 (1150MHz core clock; 3505MHz GDDR5 effective) |
| Video Drivers | NVIDIA GeForce 332.21 WHQL |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

Thanks to G.Skill for the RipjawsX 32GB DDR3 DRAM kit

For slumber power testing we used a different system:

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-4770K running at 3.3GHz (Turbo & EIST enabled, C-states disabled) |
| Motherboard | ASUS Z87 Deluxe (BIOS 1707) |
| Chipset | Intel Z87 |
| Chipset Drivers | Intel 9.4.0.1026 + Intel RST 12.9 |
| Memory | Corsair Vengeance DDR3-1866 2x8GB (9-10-9-27 2T) |
| Graphics | Intel HD Graphics 4600 |
| Graphics Drivers | 15.33.8.64.3345 |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

- Thanks to Intel for the Core i7-4770K CPU

- Thanks to ASUS for the Z87 Deluxe motherboard

- Thanks to Corsair for the Vengeance 16GB DDR3-1866 DRAM kit, RM750 power supply, Hydro H60 CPU cooler and Carbide 330R case

54 Comments


maecenas - Tuesday, September 16, 2014

Interesting stuff, good to see that competition is picking up in this market. I think it will be a significant threshold moment when we see the 240gb SSDs drop below $100.

NeatOman - Tuesday, September 16, 2014

I just saw an OCZ Vertex 460 240GB drive go for $104 the other day on newegg, and of course there sold out. Thats Nucking Futs, since i bought a 120GB 840 pro last year for $140 and the speed looks to be about the same, possibly faster in some ways on the OCZ

D. Lister - Sunday, October 12, 2014

But then, it is OCZ, and many people who know their tech history, wouldn't take an OCZ SSD for free (regardless of whether they are right or wrong in doing so).

simonrichter - Friday, October 3, 2014

I agree, really interesting to see and I'm looking forward to see what the future holds for SSDs. /Simon from http://www.consumertop.com/best-computer-storage-g...

CamdogXIII - Tuesday, September 16, 2014

I bought my first SSD back in 08. 16GB for 80$ Second SSD was 32GB for 100$. Third SSD was 64GB for 100$. Just bought a 256GB 840 Pro for 160$. We have come a long way.

PICman - Tuesday, September 16, 2014

Having an SLC cache is clever. I also like the low power consumption, reasonable performance, and especially the low price. I've had bad luck with the reliability of Samsung products, so it's great that they are getting some competition.

Wixman666 - Wednesday, September 17, 2014

I've had nothing but stellar performance from Samsung SSDs. I have dozens of them out in the field, and not one single failure. Sandisk is OK as well... OCZ might end up OK since Toshiba owns them now.

Essence_of_War - Tuesday, September 16, 2014

I feel like with every new ssd review I read, I grow to appreciate the fantastic value that the MX100 represents even more.

frontlinegeek - Saturday, September 20, 2014

Totally agree. We just outfitted our development PCs at work with MX100 256 GB drives and they are utterly fantastic for price/performance. I cannot at all get over how big an impact an SSD at work makes. FAR more than at home I can say. At least for average home use. We run multiple Visual Studio sessions and Oracle SQL Developer along with browsers and other misc apps so the impact has been just terrific.

fanofanand - Thursday, May 12, 2016

I know this article is old, but I was researching SSD's and as I trust Anandtech over any other tech site, I came here for the truth. The sad reality is, the MX100 has been overly praised, and is now priced (on Amazon) $72 more than the Sandisk Ultra II at same/similar (512 vs 480) capacity. The MX100 isn't Ultra 2 good......