FMS 2014: SanDisk ULLtraDIMM to Ship in Supermicro's Servers

by Kristian Vättö on August 18, 2014 7:00 AM EST

We are running a bit late with our Flash Memory Summit coverage as I did not get back from the US until last Friday, but I still wanted to cover the most interesting tidbits of the show. ULLtraDIMM (Ultra Low Latency DIMM) was initially launched by SMART Storage a year ago but SanDisk acquired the company shortly after, which made ULLtraDIMM a part of SanDisk's product portfolio.

The ULLtraDIMM was developed in partnership with Diablo Technologies and is an enterprise SSD that connects to the DDR3 interface instead of the traditional SATA/SAS and PCIe interfaces. IBM was the first to partner with the two to ship the ULLtraDIMM in servers, but at this year's show SanDisk announced that Supermicro will be joining as the second partner to use ULLtraDIMM SSDs. More specifically, Supermicro will be shipping ULLtraDIMM in its Green SuperServer and SuperStorage platforms, with availability scheduled for Q4 this year.

| SanDisk ULLtraDIMM Specifications | |
| --- | --- |
| Capacities | 200GB & 400GB |
| Controller | 2x Marvell 88SS9187 |
| NAND | SanDisk 19nm MLC |
| Sequential Read | 1,000MB/s |
| Sequential Write | 760MB/s |
| 4KB Random Read | 150K IOPS |
| 4KB Random Write | 65K IOPS |
| Read Latency | 150 µsec |
| Write Latency | < 5 µsec |
| Endurance | 10/25 DWPD (random/sequential) |
| Warranty | Five years |
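
To put the endurance rating in perspective, the DWPD (drive writes per day) figures can be converted into total data written. The short Python sketch below does that arithmetic. It assumes the rating applies over the full five-year warranty, a 365-day year, and decimal units (1PB = 1,000,000GB); SanDisk's exact rating conditions are not stated here, and the function name `total_writes_pb` is only illustrative.

```python
def total_writes_pb(capacity_gb: float, dwpd: float, years: float = 5.0) -> float:
    """Total data written over the period, in decimal petabytes.

    Assumes the DWPD rating holds for every day of the period,
    which is an assumption and not a published SanDisk figure.
    """
    days = years * 365
    return capacity_gb * dwpd * days / 1_000_000  # GB -> PB


# Capacities and DWPD ratings taken from the specification table.
for capacity_gb in (200, 400):
    for workload, dwpd in (("random", 10), ("sequential", 25)):
        total = total_writes_pb(capacity_gb, dwpd)
        print(f"{capacity_gb}GB, {workload} writes: {total:.3f} PB")
```

Under those assumptions, the 400GB model at 10 DWPD works out to roughly 7.3PB of random writes over five years.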

We have not covered the ULLtraDIMM before, so I figured I would provide a quick overview of the product as well. Hardware-wise, the ULLtraDIMM consists of two Marvell 88SS9187 SATA 6Gbps controllers configured in an array by a custom chip carrying a Diablo Technologies label; I presume that chip is also the secret behind DDR3 compatibility. ULLtraDIMM supports F.R.A.M.E. (Flexible Redundant Array of Memory Elements), which uses parity to protect against page-, block-, and die-level failures and is SanDisk's answer to SandForce's RAISE and Micron's RAIN. Power loss protection is supported as well and is provided by an array of capacitors.
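
For readers unfamiliar with parity protection, here is a minimal Python sketch of the general idea: store the XOR of several data elements, and any single lost element can be rebuilt from the survivors plus the parity. This is generic XOR parity, not SanDisk's actual F.R.A.M.E. implementation, whose internals are not described here; the function names and the sample page contents are made up for illustration.

```python
from functools import reduce


def xor_parity(chunks: list[bytes]) -> bytes:
    """XOR equally sized chunks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*chunks))


def rebuild(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the single missing chunk from the survivors and the parity."""
    return xor_parity(surviving + [parity])


# Three hypothetical, equally sized "pages" of data.
pages = [b"page-0..", b"page-1..", b"page-2.."]
parity = xor_parity(pages)

# Pretend pages[1] sat on a failed page, block, or die.
recovered = rebuild([pages[0], pages[2]], parity)
assert recovered == pages[1]
```

A single parity element like this only covers one failure per group; how F.R.A.M.E. groups its elements across pages, blocks, and dies is not something this sketch tries to model.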

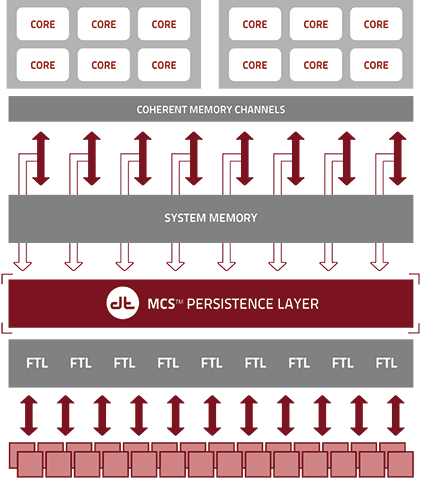

The benefit of using a DDR3 interface instead of SATA/SAS or PCIe is lower latency because the SSDs sit closer to the CPU. The memory interface has also been designed with parallelism in mind and can thus take greater advantage of multiple drives without sacrificing performance or latency. SanDisk claims write latency of less than five microseconds, which is lower than what even PCIe SSDs offer (e.g. Intel SSD DC P3700 is rated at 20µs).

Unfortunately, there are no third-party benchmarks for the ULLtraDIMM (update: there actually are benchmarks), so it is hard to say how it really stacks up against PCIe SSDs, but the concept is definitely intriguing. In the end, NAND flash is memory and putting it on the DDR3 interface is logical, even though NAND is not as fast as DRAM. NVMe is designed to make PCIe more flash-friendly, but there are still some intensive workloads that should benefit from the lower latency of the DDR3 interface. Hopefully we will be able to get a review sample soon, so we can put ULLtraDIMM through our own tests and see how it really compares with the competition.

30 Comments

esoel_ - Monday, August 18, 2014 - link

http://www.mysqlperformanceblog.com/2014/08/12/ben...

Here are some benchmarks on the IBM ones.

Kristian Vättö - Monday, August 18, 2014 - link

Awesome, thank you. I updated the article with a link to the benchmarks.

nevertell - Monday, August 18, 2014 - link

This type of technology could definitely help with disk caching on consumer products. Whilst going full flash can be great, if this technology get's cheaper or as cheap as traditional 200 gigabyte drives, having a terabyte hard drive with this to augment it would be ideal.

Mobile-Dom - Monday, August 18, 2014 - link

So these are SSDs that fit in RAM slots?

mmrezaie - Monday, August 18, 2014 - link

Does it need special drivers? how os sees the ssd?

Kristian Vättö - Monday, August 18, 2014 - link

Yes, it requires a kernel driver. In Linux the driver bypasses SCSI/SATA, whereas in Windows and VMware it emulates SCSI to appear as a storage volume. See this presentation for more details about the operation: http://www.diablo-technologies.com/wp-content/uplo...

mmrezaie - Monday, August 18, 2014 - link

thanks. It is still confusing for me. If memory bus is available like that what takes other to not host their co-processors in there? like for example having couple of very low-latency TPMs for encryption or some ASIC for computation? Is it something wrong with PCI-E bus for very low-latency IO?

mmrezaie - Monday, August 18, 2014 - link

This can solve the latency problem we have in ASICs for computation? We can have HUMA like interface and low latency/parallel interface to memory (which typically has been implemented better by Intel) of the device.

Kristian Vättö - Monday, August 18, 2014 - link

I think the problem is that ASICs tend to generate more heat and be larger in physical size, which don't make them the prime candidate for DIMM form factor since the DIMMs need to be passively cooled.

DanNeely - Monday, August 18, 2014 - link

A subset of overclockers ram has offered a fan module (typically 3x40mm or 2x60mm) that clips on top of the ram bank and plugs into a mobo fan header; so cooling should be doable. I suspect the dimm socket is probably limited to only providing enough power for dram and couldn't feed a power hungry ASIC. I suppose you could kludge that with a molex connector on the top edge; but I'd be concerned about durability for that layout.