Khronos @ SIGGRAPH 2013: OpenGL 4.4, OpenCL 2.0, & OpenCL 1.2 SPIR Announced

by Ryan Smith on July 22, 2013 9:00 AM EST

Kicking off this week is the annual SIGGRAPH conference, the graphics industry’s yearly professional event. Outside of the individual vendor events and individual technologies we cover throughout the year, SIGGRAPH is typically the major venue for new technology and standards announcements. And though it isn’t really a gaming conference – this is a show dedicated to professional software and hardware – a number of those announcements do end up being gaming related, if only tangentially. As a result SIGGRAPH offers something for everyone in the graphics/GPU trident: gaming, compute, and professional rendering alike.

Most years the first major announcement to hit the wire comes from the Khronos Group, and this year is no different. The Khronos Group is of course the industry consortium responsible for OpenGL, OpenCL, WebGL, and other open graphics/multimedia standards and APIs, so their announcements carry a great deal of importance for the industry. Khronos membership in turn is a who’s who of technology, and includes virtually every major GPU vendor, both desktop and mobile.

OpenGL 4.4 Specification Released

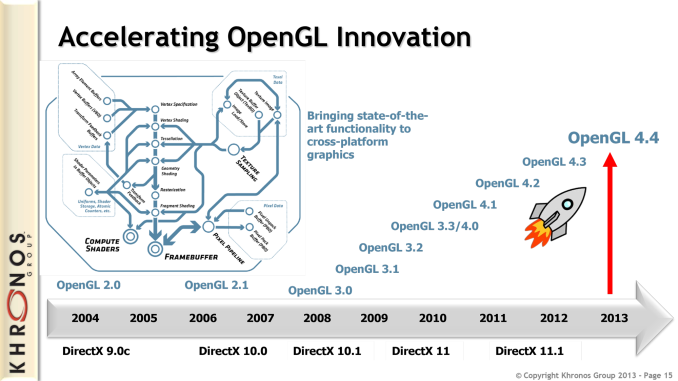

Khronos’s first announcement for SIGGRAPH 2013 is that the OpenGL 4.4 specification has been ratified and released. This being the 5th edition of OpenGL 4.x, Khronos has continued iterating on OpenGL in concert with their pipelined development process. OpenGL 4.4 follows up on OpenGL 4.3, which last year broke significant ground for OpenGL by introducing compute shaders, ASTC texture compression, and other new functionality for the API.

This year Khronos isn’t making such sweeping changes to OpenGL, but they are adding several new low-level features that should catch the eyes of developers. Most of these are admittedly so low level that it would be difficult for anyone but developers to appreciate them, but there are a few items we wanted to go over, both for their importance and for what they reflect about the wider state of OpenGL.

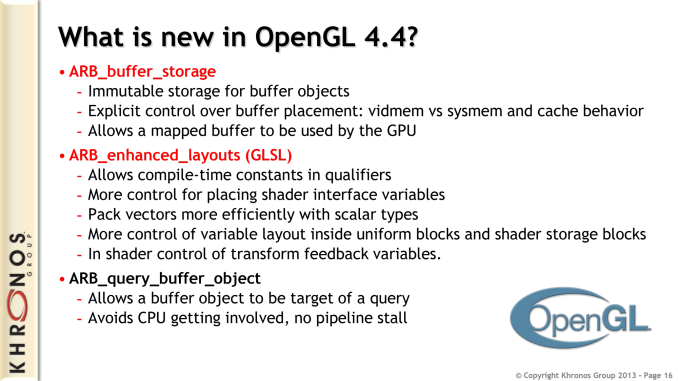

The biggest feature hitting the OpenGL core specification in 4.4 is buffer storage (ARB_buffer_storage). Buffer storage is directly targeted at APUs, SoCs, and other GPU/CPU integrated devices where the two processors share memory pools, address space, and other resources. Buffer storage at its most basic level allows developers to control where memory buffer objects are stored in these unified devices, giving developers the ability to specify whether buffers are stored in video memory or system memory, and how those buffers are to be cached. The buffer storage mechanism in turn also formally allows GPUs to access those buffers not being stored locally, giving GPUs a degree of visibility into the contents of system memory where it’s necessary. Like most Khronos additions this is a forward looking feature, with a clear outlook towards what can be done with HSA and HSA-like products that are due to be launching soon.
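To make the "where is it stored and how is it accessed" idea a little more concrete, here is a minimal sketch of how an application might assemble the flags it hands to buffer storage. The flag names and values mirror the ones defined by ARB_buffer_storage, but they are redeclared locally so the snippet compiles without GL headers; `BufferIntent`, `storageFlags`, and `flagsAreValid` are hypothetical helpers invented for illustration, not part of OpenGL.

```cpp
#include <cstdint>

// Values as defined by ARB_buffer_storage / OpenGL 4.4. A real program gets
// these from its GL headers; they are repeated here only so the sketch is
// self-contained.
const std::uint32_t kMapReadBit        = 0x0001; // GL_MAP_READ_BIT
const std::uint32_t kMapWriteBit       = 0x0002; // GL_MAP_WRITE_BIT
const std::uint32_t kMapPersistentBit  = 0x0040; // GL_MAP_PERSISTENT_BIT
const std::uint32_t kMapCoherentBit    = 0x0080; // GL_MAP_COHERENT_BIT
const std::uint32_t kDynamicStorageBit = 0x0100; // GL_DYNAMIC_STORAGE_BIT
const std::uint32_t kClientStorageBit  = 0x0200; // GL_CLIENT_STORAGE_BIT

// Hypothetical description of how the application plans to use a buffer.
struct BufferIntent {
    bool cpuStreamsWhileGpuReads; // CPU keeps writing while the GPU consumes
    bool cpuReadsBack;            // CPU maps the buffer to read results
    bool preferSystemMemory;      // hint: back the buffer with client memory
};

// Translate the intent into a storage flag mask.
inline std::uint32_t storageFlags(const BufferIntent& intent) {
    std::uint32_t flags = 0;
    if (intent.cpuStreamsWhileGpuReads) {
        // Stay mapped during GPU use, with writes visible without flush calls.
        flags |= kMapWriteBit | kMapPersistentBit | kMapCoherentBit;
    }
    if (intent.cpuReadsBack) {
        flags |= kMapReadBit;
    }
    if (intent.preferSystemMemory) {
        flags |= kClientStorageBit;
    }
    return flags;
}

// Two rules from the extension: a persistent mapping needs read or write
// access, and a coherent mapping needs to be persistent.
inline bool flagsAreValid(std::uint32_t flags) {
    const bool mappable   = (flags & (kMapReadBit | kMapWriteBit)) != 0;
    const bool persistent = (flags & kMapPersistentBit) != 0;
    const bool coherent   = (flags & kMapCoherentBit) != 0;
    if (persistent && !mappable) return false;
    if (coherent && !persistent) return false;
    return true;
}

// With a live OpenGL 4.4 context the mask would be passed along as:
//   glBufferStorage(GL_ARRAY_BUFFER, sizeInBytes, nullptr, flags);
```

Note that the storage flags are fixed when the buffer is created, which is what lets a driver decide up front where the buffer should live and how it should be cached.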

Khronos’s other major addition with OpenGL 4.4 is enhanced layouts for the OpenGL Shading Language (ARB_enhanced_layouts). The name is largely self-explanatory: enhanced layouts deal with ways to optimize the layout of data in shader programs for greater efficiency. This includes new ways of packing scalar datatypes alongside vectors, and giving developers more control over variable layout inside uniform and storage blocks. The extension also adds support for compile-time constant expressions in layout qualifiers.

Moving on from the OpenGL core, in keeping with the OpenGL development pipeline several new features are being added as official ARB extensions, being promoted (and modified/unified as necessary) from vendor specific extensions. Chief among these new ARB extensions are extensions to support sparse textures (ARB_sparse_texture) and bindless textures (ARB_bindless_texture). You may recognize these features from the launch of AMD’s Radeon HD 7000 series and NVIDIA’s GeForce GTX 600 series respectively, as these two extensions are based on the new hardware features those products introduced and are the evolution of their previous forms as vendor specific extensions.

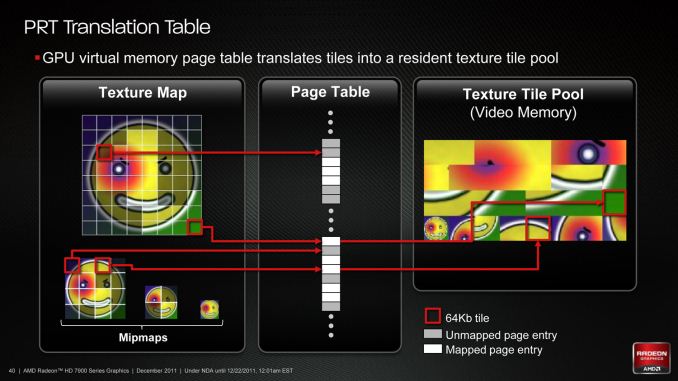

Sparse textures, also known as partially resident textures, give the hardware the ability to only keep tiles/chunks of textures in resident memory, versus having to load (and unload) whole textures. The most practical application of this technology is to enable megatexture-like texture management in hardware, loading only the necessary tiles of the highest resolution textures; however, for professional developers this also opens up a new usage scenario: textures larger than the physical memory of a card can now be used, since only the tiles that are actually needed have to be resident.
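A bit of back-of-the-envelope arithmetic shows why this matters. The sketch below compares the memory needed for a whole texture against the memory needed for just the tiles that overlap a visible region. The 128x128 texel tile used in the usage note is an assumption chosen for illustration (a real application would query the tile size from the driver), mipmaps are ignored, and the type and function names are hypothetical.

```cpp
#include <cstdint>

struct TextureDesc {
    std::uint64_t width;         // texels
    std::uint64_t height;        // texels
    std::uint64_t bytesPerTexel; // e.g. 4 for RGBA8
};

struct Region {
    std::uint64_t x, y;          // top-left corner, in texels
    std::uint64_t width, height; // extent, in texels
};

// Memory needed if the whole texture has to be resident.
inline std::uint64_t fullSizeBytes(const TextureDesc& t) {
    return t.width * t.height * t.bytesPerTexel;
}

// Number of square tiles (tile x tile texels) that overlap the region.
inline std::uint64_t tilesTouched(const Region& r, std::uint64_t tile) {
    if (r.width == 0 || r.height == 0) return 0;
    const std::uint64_t x0 = r.x / tile;
    const std::uint64_t x1 = (r.x + r.width - 1) / tile;
    const std::uint64_t y0 = r.y / tile;
    const std::uint64_t y1 = (r.y + r.height - 1) / tile;
    return (x1 - x0 + 1) * (y1 - y0 + 1);
}

// Memory needed if only the overlapping tiles are resident.
inline std::uint64_t residentBytes(const TextureDesc& t, const Region& r,
                                   std::uint64_t tile) {
    return tilesTouched(r, tile) * tile * tile * t.bytesPerTexel;
}
```

For a 16384x16384 RGBA8 texture, `fullSizeBytes` comes to 1 GiB, while a tile-aligned 2048x2048 region covers 256 tiles of 64 KiB each, or 16 MiB. The same region shifted by 100 texels in each direction straddles tile boundaries and touches 289 tiles instead.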

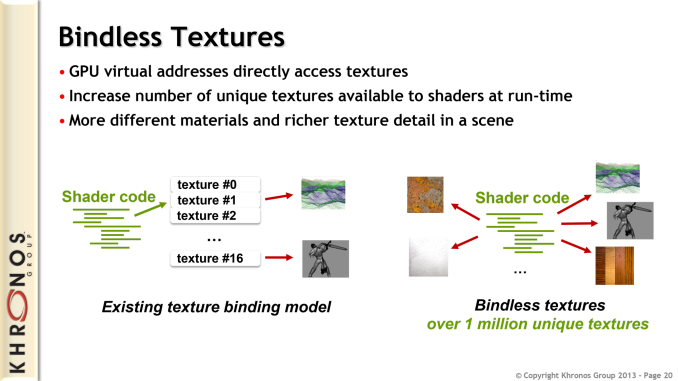

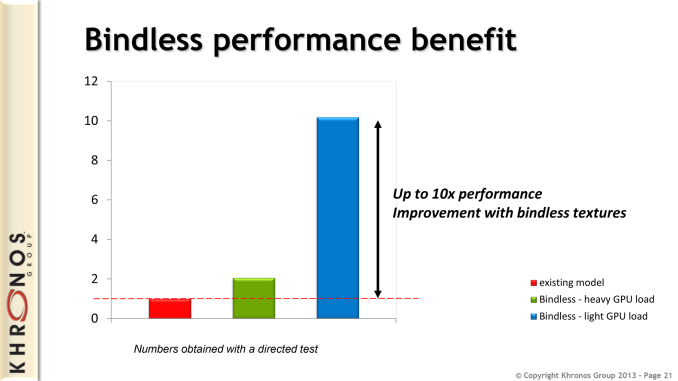

Meanwhile bindless texture functionality does away with the concept of texture “slots” and the limits imposed by their limited number, replacing the fixed size binding table with unlimited redirection through the use of virtual addresses. The primary benefit of this is that it allows the easy addition and use of more textures within a scene (under most DX11 hardware this limit was 128 slots); however, there is also a performance angle to this. Since binding and rebinding objects is a task that relies on the CPU, getting rid of binding altogether can improve performance in CPU limited scenarios. Khronos/NVIDIA throws around a 10x best-case number, and while this is certainly the exception rather than the rule it will be interesting to see what the real world benefits are like once applications start coming out utilizing this feature.
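The CPU-side difference can be sketched with a toy model. This is not OpenGL code: it simply counts how many bind calls a renderer with a single texture slot would issue for a sequence of draws, and contrasts that with a bindless arrangement in which each draw record carries a 64-bit texture handle. The function and struct names are hypothetical, and the single-slot assumption is a deliberate simplification.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Slot model: one texture slot, rebound whenever the next draw needs a
// different texture than the one currently bound. Returns the bind count.
inline std::size_t bindCallsWithSlots(
        const std::vector<std::uint32_t>& textureIdPerDraw) {
    std::size_t binds = 0;
    bool anythingBound = false;
    std::uint32_t bound = 0;
    for (std::uint32_t id : textureIdPerDraw) {
        if (!anythingBound || id != bound) {
            bound = id;
            anythingBound = true;
            ++binds; // stands in for one CPU-side bind call
        }
    }
    return binds;
}

// Bindless model: the handle travels with the per-draw data, so the draw
// loop issues no bind calls at all. In ARB_bindless_texture the handle is a
// 64-bit value obtained once per texture and made resident before use.
struct DrawRecord {
    std::uint64_t textureHandle;
};

inline std::vector<DrawRecord> buildBindlessDraws(
        const std::vector<std::uint64_t>& handlePerDraw) {
    std::vector<DrawRecord> draws;
    draws.reserve(handlePerDraw.size());
    for (std::uint64_t handle : handlePerDraw) {
        draws.push_back(DrawRecord{handle});
    }
    return draws;
}
```

In the slot model a draw sequence that alternates between textures pays for a bind on nearly every draw, whereas the bindless records can be written into a buffer once and reused. How much of that saving shows up in a real application depends on how CPU limited it was to begin with.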

Ultimately both of these features, along with several other ARB extensions, are in the middle of their evolution. The ARB extension stage is essentially a half-way house for major features, allowing features to be further refined and analyzed after being standardized by the ARB. The ultimate goal here is for most of these features to graduate from extensions and become part of the core OpenGL standard in future versions, which means if everything goes smoothly we’d expect to see sparse texture support and bindless texture support in the core standard (and the devices that support it) in the not too distant future.

Finally, in a move that should have developers everywhere jumping with joy, OpenGL now has official and up to date conformance tests. OpenGL has not had an up to date conformance test since the project was led by SGI almost a decade ago, with the task of developing the tests being a continual work in progress for many years. In the interim the lack of conformance testing has been an obstacle for OpenGL, as there wasn’t an official way to validate implementations against known and expected behaviors, leading to more uncertainty and bugs than anyone was comfortable with.

Now with the completion of the new conformance tests, OpenGL implementations can be tested for their conformance, and in turn those implementations will now need to be conformant before they are approved by Khronos. For developers this means they will be writing software against better devices and drivers, and device makers will have an official target to chase rather than having to interpret the sometimes ambiguous OpenGL standards.

28 Comments

MikhailT - Monday, July 22, 2013

> This being the 5th revision of OpenGL 4.x,

Don't you mean 4th revision, or did I miss something? The 4.0 release isn't a revision of 4.x, so that makes 4.4 the 4th revision...

nutgirdle - Monday, July 22, 2013

Ahh, the dreaded "Count from zero" confusion.

Ryan Smith - Monday, July 22, 2013

I was indeed counting from zero. I've reworded it to remove the ambiguity.

mr_tawan - Monday, July 22, 2013

I think 4.0 is also a revision of OpenGL.

Ortanon - Monday, July 22, 2013

4th revision, or 5th version haha.

lmcd - Monday, July 22, 2013

Yes, you are correct.

B3an - Monday, July 22, 2013

I've always wanted to see a detailed comparison of the latest OpenGL features versus the latest DirectX features, including the pros and cons of OpenGL and DX. I'm pretty sure DX 11.2 is more advanced/overall better but it's hard to tell.

Ryan Smith - Monday, July 22, 2013

At a high level the two are roughly equivalent. With compute shaders in OpenGL 4.3 there is no longer a significant feature gap for reimplementing D3D11 in OpenGL. I didn't cover this in the article since it's getting into the real minutiae, but 4.4 further improves on that in part by adding a new vertex packing format (GL_ARB_vertex_type_10f_11f_11f_rev) that is equivalent to one that is in D3D. Buffer storage also replicates something D3D does.

przemo_li - Thursday, July 25, 2013

DX is actually a bit behind (though DX11.2 will fix some of this), and OpenGL 4.4 pushed for some non-essential stuff that was missing compared to DX. (For ease of porting, because the same things could be done already.)

http://rastergrid.com/blog/2011/10/opengl-vs-direc...

(Only for OGL 4.3 vs DX11.1)

WhitneyLand - Monday, July 22, 2013

A vote for an article on the latest in GPU raytracing performance. Even though it's domain specific, it really pushes the limits of what's possible on a GPU and therefore touches on a lot of generally interesting hardware and performance issues. It's just cool stuff. One example is the Octane renderer.