Khronos Group Releases Neural Network Exchange Format 1.0, Showcases First Public OpenXR Demo

by Nate Oh on August 14, 2018 9:00 AM EST

Posted in: GPUs, Khronos, VR, Machine Learning, Neural Networks, Standards, Deep Learning, AR, AI

Today at SIGGRAPH the Khronos Group, the industry consortium behind OpenGL and Vulkan, announced the ratification and public release of its Neural Network Exchange Format (NNEF), now finalized as the official 1.0 specification. Announced in 2016 and launched as a provisional spec in late 2017, NNEF is Khronos' open deep learning format for neural network models, allowing device-agnostic deployment of common neural networks. And on a flashier note, StarVR and Microsoft are providing the first public demonstration of Khronos' OpenXR, a cross-platform API standard for VR/AR hardware and software.

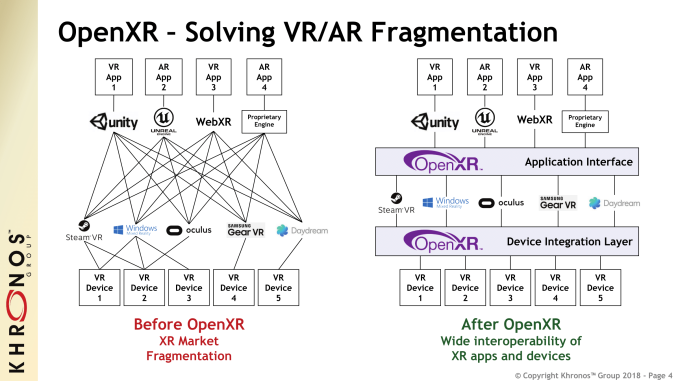

With a two-part approach, OpenXR's goal is VR/AR interoperability encompassing both the application interface layer (e.g. Unity or Unreal) and the device layer (e.g. SteamVR, Samsung GearVR). As for SIGGRAPH's showcase, Epic's Showdown demo is being exhibited with StarVR and Windows Mixed Reality headsets (not Hololens) through OpenXR runtimes, via an Unreal Engine 4 plugin. Given the number of pre-existing proprietary APIs, the original OpenXR iterations were actually developed in line with them to such an extent that Khronos considered the current OpenXR more like a version 2.0.

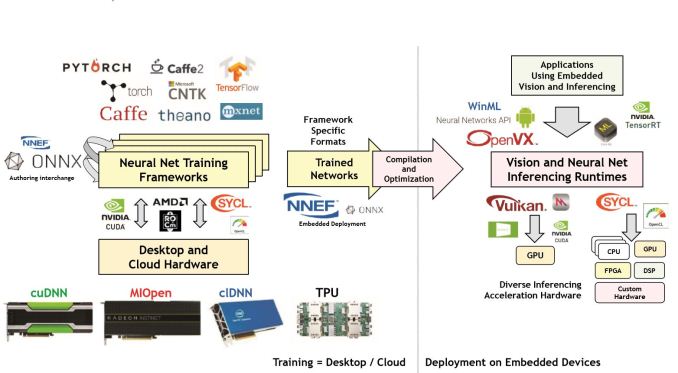

As for NNEF, the key context is one of the side effects of the modern deep learning (DL) boom: the vast array of valid DL frameworks and toolchains. In addition to those frameworks, we've seen that any and all pieces of silicon have been pressed into action as AI accelerators: GPUs, CPUs, SoCs, FPGAs, ASICs, and even more exotic fare. To recap briefly, after a neural network model is developed and finalized by training on more powerful hardware, it is then deployed for use on typically less powerful edge devices.

For many companies, the number of incompatible choices makes 'porting' much more difficult between any given training framework and any given inferencing engine, especially as companies implement more and more specialized hardware, datatypes, and weights. With NNEF, the goal is to provide an exchange format that allows any given training framework to be deployed onto any given inferencing engine, without sacrificing specialized implementations.
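To put rough numbers on that porting problem, here is a minimal sketch (not from Khronos) that counts the converters needed with and without a shared exchange format. The engine names are hypothetical placeholders, the framework list is only a sample, and the sketch assumes exactly one exporter per framework and one importer per engine.

```python
# Illustrative only: these lists are placeholders, not an inventory from Khronos.
frameworks = ["TensorFlow", "Caffe", "Caffe2"]
engines = ["engine_a", "engine_b", "engine_c", "engine_d"]  # hypothetical inference engines


def direct_converters(n_frameworks: int, n_engines: int) -> int:
    """Converters needed when every framework is ported to every engine directly."""
    return n_frameworks * n_engines


def exchange_converters(n_frameworks: int, n_engines: int) -> int:
    """Converters needed with a shared format: one exporter per framework
    plus one importer per engine (an assumption of this sketch)."""
    return n_frameworks + n_engines


direct = direct_converters(len(frameworks), len(engines))
shared = exchange_converters(len(frameworks), len(engines))
print(f"direct: {direct}, via exchange format: {shared}")
```

With three frameworks and four engines, direct porting needs twelve converters while a common format needs seven, and the gap widens as either list grows.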

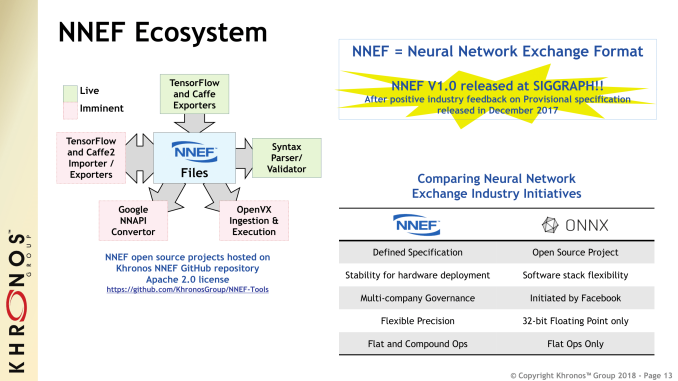

Today's ratification and final release is more of a 'hard launch' with NNEF ecosystem tools now available on GitHub. When NNEF 1.0 was first launched as a provisional specification, the idea was to garner industry feedback, and after those changes NNEF 1.0 has been released as an official standard. In that sense, while both initiatives are open-source, NNEF differs from the similar Open Neural Network Exchange (ONNX) that was started by Facebook and Microsoft, which is organized as an open-source project. And where ONNX might focus on interchange between training formats, NNEF continues to be designed for variable deployment.

Current tools and support for the standard include two open source TensorFlow converters for protobuf and Python network descriptors, as well as a Caffe converter. Khronos also notes tool development efforts from other groups: an open source Caffe2 converter by Au-Zone Technologies (due Q3 2018), various tools by AImotive and AMD, and an Android NN API importer by a team at National Tsing-Hua University of Taiwan. More information on the final NNEF 1.0 spec can be found on its main page, including the full open specification on Khronos' registry.

Also announced was the Khronos Education Forum, spurred by increasing adoption and learning of Vulkan and other Khronos standards/APIs, the former of course not known for a gentle learning curve. One of the more interesting tidbits of this initiative is access to the members of the various Khronos Working Groups, meaning that educators and students will get guidance from the very people who designed a given specification.

8 Comments

Flunk - Tuesday, August 14, 2018

Is VR still a thing? It seems like we've been waiting for a killer app for years.

edzieba - Tuesday, August 14, 2018

Why would a 'killer app' be necessary? Smartphones proliferated without a 'killer app' (the iPhone launched without any apps at all) and with only a subset of the features available from the WAN-enabled PDAs that proceeded it.

DearEmery - Tuesday, August 14, 2018

There were mobile phones before smartphones. You know those refrigerators with external antenna that you couldn't use because a call required a mortgage? Everyone had one. Then everyone owned a smaller one. Then a thinner one. Smaller. IT HAS SNAKE. Bigger. Color display. Bigger color display. IT HAS INTERNET. Bigger. I CAN TOUCH THE SCREEN. Maddox hates it. Bigger.

The "smartphone" was more iterative and the leap far smaller than Desktop/Monitor to Displays on Face. Everyone needed a phone anyway. Nobody needs a display on face. Display on face must prove self with killer app.

Dr. Swag - Tuesday, August 14, 2018

inb4 fortnite vr

mode_13h - Tuesday, August 14, 2018

Tech takes time. People forget that.

We're only 1 generation of GPUs, 0.5 generations of consoles, and 1.5 generations of HMDs since VR officially launched. It's still expensive, cumbersome, and not even wireless.

Amandtec - Tuesday, August 14, 2018

Smartphones had two killer apps: phoning and email.

Sakkura - Tuesday, August 14, 2018

You didn't need a smartphone to make a phone call, and email wasn't that big of a deal on its own.

Spunjji - Wednesday, August 15, 2018

You didn't even need a smartphone for email, I was doing that for years before I got a WinCE phone which itself predated the "smartphone" proper by a couple of years.