AMD To Launch New Desktop GPU This Quarter (Q2’15) With HBM

by Ryan Smith on May 6, 2015 2:22 PM EST

Posted in: GPUs, AMD, Radeon, AMD FAD 2015

Having just left the stage at AMD’s financial analyst day is CEO Dr. Lisa Su, who was on stage to present an update on AMD’s computing and graphics business. As AMD already discussed its technology roadmaps for the next two years earlier in this presentation, we’ll jump right into the new material.

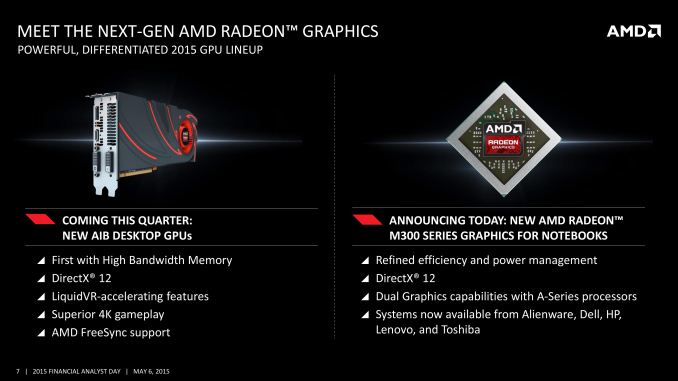

Not shown on AMD’s GPU roadmap, but now confirmed by Dr. Su, is that AMD will be launching new desktop GPUs this quarter. AMD is not saying much about these new products yet, though based on their description it does sound like we’re looking at high-performance products (and for anyone asking, the picture of the card is a placeholder; AMD doesn’t want to show any pictures of the real product at this point). These new products will support DirectX 12, though I will caution against confusing that with Feature Level 12_x support until we know more.

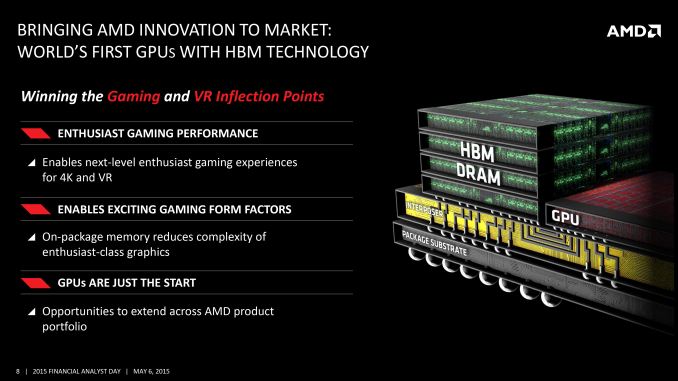

Meanwhile the big news here is that these forthcoming GPUs will be the first AMD GPUs to support High Bandwidth Memory (HBM). AMD’s GPU roadmap coyly labels this as a 2016 technology, but in fact it is coming to GPUs in 2015. The advantage of going with HBM at this time is that it will allow AMD to greatly increase their memory bandwidth while bringing down power consumption. Couple that with the fact that any new GPU from AMD should also include AMD’s latest color compression technology, and the implication is that the effective increase in memory bandwidth should be quite large. AMD sees this as one of the keys to delivering better 4K performance along with better VR performance.

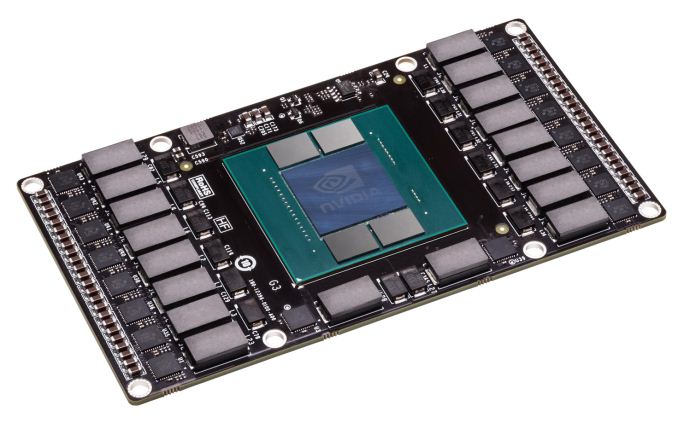

In the process AMD has also confirmed that these HBM-equipped GPUs will allow them to experiment with new form factors. By placing the memory on the same package as the GPU, AMD will be able to save space and produce smaller cards, which will allow them to produce designs other than the traditional large 10”+ cards that are typical of high-end video cards. AMD competitor NVIDIA has been working on HBM as well and has already shown off a test vehicle for one such card design, so we have reason to expect that AMD will be capable of something similar.

With apologies to AMD: NVIDIA’s Pascal Test Vehicle, An Example Of A Smaller, Non-Traditional Video Card Design

Finally, while talking about HBM on GPUs, AMD is also strongly hinting that they intend to bring HBM to other products as well. Given their product portfolio, we consider this to be a pretty transparent hint that the company wants to build HBM-equipped APUs. AMD’s APUs have traditionally struggled to reach peak performance due to their lack of memory bandwidth – 128-bit DDR3 only goes so far – so HBM would be a natural extension to APUs.
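To put the bandwidth gap in rough numbers, here is a back-of-the-envelope sketch of theoretical peak bandwidth. The specific figures are our assumptions, not numbers AMD presented: a DDR3-2133 speed grade for the 128-bit APU case, and first-generation HBM figures of a 1024-bit interface per stack at 1 Gbps per pin. The helper name `peak_bandwidth_gbs` is purely illustrative.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mtps: float) -> float:
    """Theoretical peak bandwidth in GB/s (10^9 bytes per second).

    bus_width_bits:     total width of the memory interface in bits
    transfer_rate_mtps: data rate per pin in mega-transfers per second
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mtps * 1e6 / 1e9


# Assumption: dual-channel (128-bit) DDR3 running at DDR3-2133.
ddr3_gbs = peak_bandwidth_gbs(128, 2133)

# Assumption: a single first-generation HBM stack, 1024 bits wide at 1 Gbps per pin.
hbm_stack_gbs = peak_bandwidth_gbs(1024, 1000)

print(f"128-bit DDR3-2133: {ddr3_gbs:.1f} GB/s")
print(f"One HBM stack:     {hbm_stack_gbs:.1f} GB/s")
print(f"Ratio:             {hbm_stack_gbs / ddr3_gbs:.2f}x")
```

These are peak figures only; real-world throughput depends on access patterns and the memory controller, and an actual product may use a different number of stacks or different clocks.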

146 Comments


testbug00 - Thursday, May 7, 2015 - link

Sorry, but, your issue with AMD is what BS they say? Interesting. Never heard any of their competitors spew BS, or, outright lie about things to consumers... Oh... Wait...

chizow - Thursday, May 7, 2015 - link

@testbug00

AMD has a LONG history of downplaying and outright talking shit about their competitor's solutions, when it suits them or they don't have a competitive solution. Start with PhysX. Or CUDA. Or GameWorks. Or 3D Vision. Or G-Sync. It's the same BS from AMD, we don't like closed, proprietary, we love Open. Their solution will fail because it's closed. And what do you get in return? Nothing! Empty promises, half-assed, half-baked, half-supported solutions that no one cares about. Open/Bullet Physics. OpenCL. GamingEvolved/TressFX. HD3D. FreeSync. Abandon/Vaporware.

I know AMD fanboys like you will repeatedly cite the 970 VRAM issue, but again, the net result had no negative impact on the consumer. The 970, despite the paper spec restatement, is still the same outstanding value and performer it was the day it destroyed AMD's entire product stack. Unlike AMD, who repeatedly overpromises and underdelivers. FreeSync is just the latest addition to this long list of examples. Oh wait it isn't really free, new panels cost a lot more. Oh wait you do need new hardware. Oh wait old panels can't be just firmware flashed. Oh wait there's a minimum refresh rate. Oh wait outside of a limited window, it's actually worse than non-Vsync. Oh wait FreeSync disables VRR. Oh wait it doesn't work with CrossFire.

Yet AMD this whole time, kept saying FreeSync would be better because it didn't require any "unnecessary" proprietary hardware or licensing fee, and would actually work better than G-Sync. Just a bunch of lies and nonsense, FreeSync is in disarray as a half-assed, half-broken situation, but coming from AMD, why would anyone expect anything less?

Enderzt - Thursday, May 7, 2015 - link

What are you going on about?

I am not a specific fan of either team but it's crazy that you are talking about AMD as being the dishonest company when Nvidia is currently still suffering from the 3.5 gig 970 debacle. Talk about being disingenuous to your user base. Pretending Nvidia is superior to AMD in terms of honesty is laughable.

And what do you mean by FreeSync BS/run-up? They are offering an industry standard solution for adaptive monitor refresh rates that requires no license fee or proprietary display scaler like G-sync. This is an open VESA standard. I don't really understand how you could think AMD is the bad guy in this market when Nvidia is the one charging you 300 extra dollars for a monitor with the same features and less functionality. G-sync displays only have a single display-port input and the monitor company needs to add a completely separate scaler module if they wanted to add HDMI/DVI/VGA inputs, which again further raises the price of these monitors.

So AMD goes open source with their refresh rate solution that will benefit the widest gamer audience with the least expense and they're the dishonest bullshit company. Your arguments just don't hold much weight and pretty obviously come from a biased viewpoint.

Do Nvidia's top-of-the-line cards currently outperform AMD? Yeah, can't really argue with facts and numbers. Is the Nvidia experience great? Hell yeah! G-sync kicks ass and the cards work well. But pretending they are better top to bottom, across all fields, is a pretty ridiculous statement. There are exceptions to every rule and AMD has a nice fit in the price to performance market. And according to what we know about this next gen card release they will soon be competitive at the top of the line as well.

chizow - Thursday, May 7, 2015 - link

@Enderzt, I won't repost everything just read my reply above. Same applies here.

You aren't getting the same features and less functionality, you're getting a half-baked, half-assed solution with a ton of asterisks and glaring flaws, but as an AMD user and fan I can tell it will be hard for you to even notice.

But yeah, that $300 cheaper monitor looks to be cheaper for a reason, it's just not very good.

http://www.tftcentral.co.uk/reviews/acer_xg270hu.h...

"From a monitor point of view the use of FreeSync creates a problem at the moment on the XG270HU at the moment, just as it had on the BenQ XL2730Z we tested recently. The issue is that the OD (overdrive) setting does nothing when you connect the screen over DisplayPort to a FreeSync system. "

Looks like a recurring theme for AMD FreeSync monitors, I'm not sure how anyone can claim these technologies are even close to equivalent.

Also, if you want to get into really deceptive behavior on AMD's part, you can rewind to driver-gate where AMD was seeding press with overclocked/custom-BIOS 290/X to make them look and perform better. You can also look at their attempts to deny any problems with CF and runt frames, until PCPer made it clearly obvious and ultimately forced AMD to revisit and fix their CF framepacing.

P39Airacobra - Wednesday, June 3, 2015 - link

Really? And Nvidia was so honest about the 970! Yes you are still a fanboy! Lay off the fluoride, and cut down on your vaccines!

01189998819991197253 - Friday, May 8, 2015 - link

@ Zuggurat

That was a good choice on your HTPC GPU. Nvidia has some pretty serious HDMI handshake issues, which makes using an Nvidia GPU in an HTPC incredibly annoying. I've got Nvidia in my desktop and wish I could use it in my HTPC, but having to reboot or restart the graphics drivers whenever the TV or receiver is turned off is a deal breaker for me.

This issue has persisted for years now and it looks to me like Nvidia just doesn't care. So I'm stuck with AMD in my HTPC because Nvidia is too cheap and lazy to get the HDMI handshake right.

chizow - Friday, May 8, 2015 - link

You must have a really old or low quality receiver, I haven't had any issues with this on 2 receivers, most recently a Yamaha VSX-677 that has standby HDMI passthrough. My old Sony STR DG1000 didn't have issues with this either.

Nvidia does have some HDMI issues, but only because they explicitly honor what is in the mfg's INF files. If mfg's did a better job of forming their INF files, these issues wouldn't be as prevalent.

Mr Perfect - Thursday, May 7, 2015 - link

Because humans are emotional beings, Mr. Spock.

Seriously though, I'm with you. Whoever builds the best card at the time I'm buying gets the sale.

Zefeh - Wednesday, May 6, 2015 - link

But when you take head to head comparisons of GPUs AMD released in 2013 and compare them to the current lineup that Nvidia has launched this year, it's kinda stupid how little performance increase there is.

I plugged in all the fps values from the anandtech bench comparison between the 290X vs the GTX 980. I computed the percentage increase in FPS of the 980 over the 290X, then summed these and divided (by 40 test values) to get the average performance gain in FPS as a percentage.

Guess what - The GTX980 has on average 12.85% more FPS than the 290X. 13% more FPS at 83% cost increase. That is very bad. The reason why Nvidia has more market share isn't because they have a better product, it's because their marketing is MUCH better than AMD's. Hard numbers are startling.
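As an aside, the averaging described in this comment can be sketched as below. The per-test FPS values are hypothetical placeholders (the comment does not list its 40 data points), and the function names are illustrative only; the 12.85% and 83% inputs are the figures quoted in the comment.

```python
def average_percent_gain(baseline_fps, candidate_fps):
    """Mean of the per-test percentage FPS gains of candidate over baseline."""
    if not baseline_fps or len(baseline_fps) != len(candidate_fps):
        raise ValueError("need two non-empty lists of equal length")
    gains = [(c - b) / b * 100.0 for b, c in zip(baseline_fps, candidate_fps)]
    return sum(gains) / len(gains)


def relative_perf_per_dollar(perf_gain_pct, cost_increase_pct):
    """Performance per dollar of the pricier card relative to the cheaper one."""
    return (1 + perf_gain_pct / 100.0) / (1 + cost_increase_pct / 100.0)


# Hypothetical FPS pairs, NOT the benchmark data the comment refers to.
baseline = [50.0, 40.0, 80.0]
candidate = [55.0, 46.0, 88.0]
example_gain = average_percent_gain(baseline, candidate)

# Figures quoted in the comment: 12.85% more FPS at an 83% higher price.
value_ratio = relative_perf_per_dollar(12.85, 83.0)

print(f"Average gain on placeholder data: {example_gain:.2f}%")
print(f"Relative performance per dollar:  {value_ratio:.2f}")
```

Note that averaging per-test percentage gains weights every test equally, so it can differ from comparing the two cards' average FPS across all tests.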

chizow - Wednesday, May 6, 2015 - link

@Zefeh, at face value what you say is true, however, if you look historically and the fact we are stuck on the same 28nm process node, the advancements made this generation and Maxwell in particular are frankly stunning.

Again, the key thing to remember is that we're on the same process here, to be able to increase performance nearly 2x (780Ti to Titan X or GTX 680 to GTX 980) on the same process node without the benefit of a simple doubling of transistors, while also simultaneously reducing or maintaining TDP is nothing short of miraculous.

Now, once you put that into context, and see we weren't even able to manage that with BOTH a new process node AND new architectures, you can see why 28nm will actually be remembered as a rather significant node (at least for Nvidia). They've increased performance something like five-fold (GTX 480 to GTX Titan X) across just a single process node transition (40nm to 28nm).

Again, you can claim all you want that the only reason Nvidia has greater marketshare is because of marketing, but I can quite easily rattle off about a dozen of their technologies that I enjoy that make their product better than AMD. No marketing in the world is going to make up for that gap in actual features and end-user experience, sorry.