Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST

Posted in: Smartphones, camera, Android, Mobile

Recently I was asked to give a presentation about smartphone imaging and optics at a small industry event, and given my background I was more than willing to comply. I didn’t craft this presentation around any particular product or announcement, but I thought it worth sharing beyond just the event itself, especially in the recent context of the HTC One. The idea was to provide a high-level primer on both camera optics and general smartphone imaging trends, and to catalyze some discussion.

For readers here I think this is a great primer on the state of things if you’re not paying super close attention to smartphone cameras, and on the imaging chain of a mobile device at a high level.

Some figures are from the incredibly useful (never leaves my side in book form or PDF form) Field Guide to Geometrical Optics by John Greivenkamp; a few others are my own or from OmniVision or Wikipedia. I've put the slides into a gallery and gone through them pretty much individually, but if you want the PDF version, you can find it here.

Smartphone Imaging

The first two slides are just background about myself and the site. I did my undergrad at the University of Arizona and earned a bachelor’s in Optical Sciences and Engineering on the Optoelectronics track. I worked at a few relevant places as an undergrad intern for a few years, and made some THz gradient index lenses at the end. I think it’s a reasonable expectation that everyone who is a reader is also already familiar with AnandTech.

Next up are some definitions of optical terms. I think any discussion about cameras is impossible to have without at least introducing the index of refraction, wavelength, and optical power. I’m sticking very high level here. Index of refraction refers of course to how much the speed of light is slowed down in a medium compared to vacuum, which is important for understanding refraction. Wavelength is of course easiest to explain by mentioning color, and optical power refers to how quickly a system converges or diverges an incoming ray of light. I’m also playing fast and loose when talking about magnification here, but again in the camera context it’s easier to explain this way.
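To make those definitions a little more concrete, here is a minimal Python sketch. It assumes the textbook relations v = c/n for the speed of light in a medium, Snell's law (n1 sin θ1 = n2 sin θ2) for refraction, and power = 1/f for a thin lens in air. The function names, the index of 1.5 and the 4 mm focal length are illustrative assumptions, not values from the slides.

```python
import math

C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def speed_in_medium(n):
    """Speed of light in a medium with index of refraction n (v = c / n)."""
    return C_VACUUM_M_PER_S / n


def refraction_angle_deg(n1, n2, incidence_deg):
    """Refracted ray angle from Snell's law: n1*sin(t1) = n2*sin(t2)."""
    s = n1 * math.sin(math.radians(incidence_deg)) / n2
    if abs(s) > 1.0:
        # No refracted ray exists (total internal reflection).
        raise ValueError("total internal reflection")
    return math.degrees(math.asin(s))


def optical_power_diopters(focal_length_m):
    """Optical power of a thin lens in air, in diopters (1 / focal length)."""
    return 1.0 / focal_length_m


if __name__ == "__main__":
    # Hypothetical values: n = 1.5 medium, 30 degree incidence, 4 mm lens.
    print(speed_in_medium(1.5))
    print(refraction_angle_deg(1.0, 1.5, 30.0))
    print(optical_power_diopters(0.004))
```

A shorter focal length means higher optical power, which is why the tiny lenses in a phone camera module bend light so aggressively.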

Another good term is F-number, the so-called F-word of optics. Most of the time in the context of cameras we’re talking about working F-number, and the simplest explanation is that it describes the light collection ability of an optical system. F-number is defined as the ratio of the focal length to the diameter of the entrance pupil. The normal progression for people who think about cameras is in steps of the square root of two (full stops), each of which changes the light collection by a factor of two. Finally we have optical format or image sensor format, which is generally given in the notation 1/x" in units of inches. This is the standard format for giving a sensor size, but it doesn’t have anything to do with the actual size of the image circle, and rather traces its roots back to the diameter of a vidicon glass tube. Think of it as analogous to the size class of a TV or monitor: the exact dimensions change from manufacturer to manufacturer, but sensors of the same class are roughly the same size. Because the notation is a fraction, 1/2" is a bigger sensor than 1/7".
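The F-number arithmetic can be sketched in a few lines of Python. This assumes the definition above (focal length divided by entrance pupil diameter) and the standard result that light collection scales as 1/N². The function names and the 4 mm / 2 mm example values are hypothetical, chosen only for illustration.

```python
import math
from typing import List


def f_number(focal_length_mm: float, pupil_diameter_mm: float) -> float:
    """F-number N = focal length / entrance pupil diameter."""
    return focal_length_mm / pupil_diameter_mm


def full_stops(count: int) -> List[float]:
    """First `count` full stops starting at f/1: N_k = sqrt(2) ** k."""
    return [math.sqrt(2) ** k for k in range(count)]


def relative_light(n_from: float, n_to: float) -> float:
    """Light collected at n_to relative to n_from (scales as 1 / N^2)."""
    return (n_from / n_to) ** 2


if __name__ == "__main__":
    # Hypothetical lens: 4 mm focal length, 2 mm entrance pupil.
    print(f_number(4.0, 2.0))
    # Nominal stop sequence, rounded the way lenses are usually marked.
    print([round(n, 1) for n in full_stops(5)])
    # Stopping down one full stop from f/2 halves the collected light.
    print(relative_light(2.0, 2.0 * math.sqrt(2)))
```

The nominal markings (f/1.4, f/2.8 and so on) are rounded values of powers of the square root of two, so going from f/2 to a marked f/2.8 is only approximately a factor of two.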

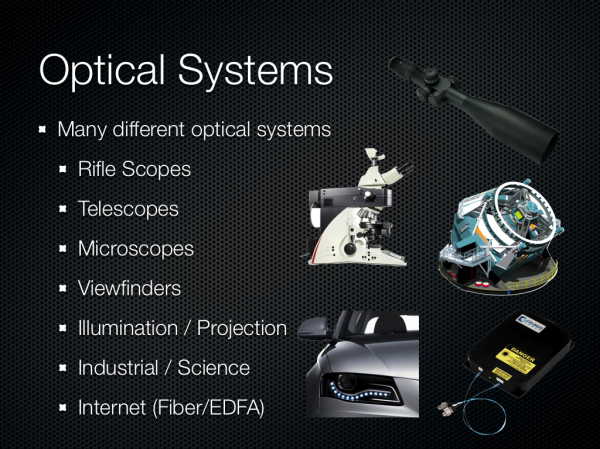

There are many different kinds of optical systems, and since I was originally asked just to talk about optics I wanted to underscore the broad variety of systems. Generally you can fit them into two different groups — those designed to be used with the eye, and those that aren’t. From there you get different categories based on application — projection, imaging, science, and so forth.

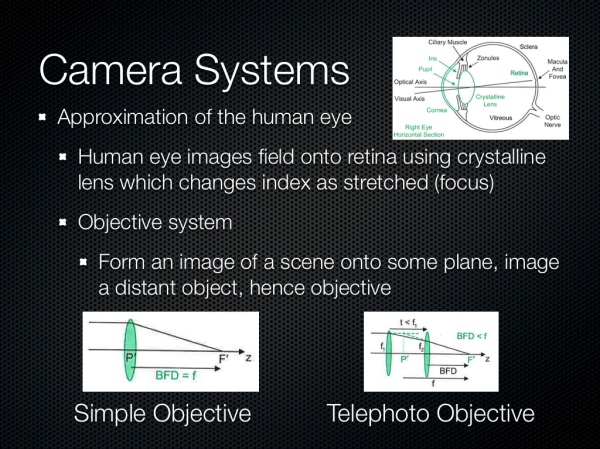

We’re talking about camera systems however, and thus objective systems. This is roughly an approximation of the human eye but instead of the retina the image is formed on a sensor of some kind. Cameras usually implement similar features to the eye as well – a focusing system, iris, then imaging plane.

60 Comments


mdar - Thursday, February 28, 2013 - link

You say "This is the standard format for giving a sensor size, but it doesn’t have anything to do with the actual size of the image circle, and rather traces its roots back to the diameter of a vidicon glass tube"

The above statement, though partially true, is misleading. The dimension DOES give sensor size multiplied by factor of roughly 1.5. For example if some one says 1/1.8" sensor, the sensor diagonal is ~ 1/(1.8*1.5). The 1.5 factor probably comes from vidicon glass tube.

In fact, if someone wants just one parameter for image quality, it should be sensor size. Pixel technologies do improve (like using BSI), but even now the 1/3" sensor of the iPhone, Samsung or Lumia 920 camera can just barely match the quality of the 1/1.8" sensor of the 4-year-old Nokia N8.

frakkel - Thursday, February 28, 2013 - link

I am curious if you can elaborate a little regarding lens material. You say that today most lens elements are made of plastic. Is this both for front and rear facing camera lenses?

I was under the impression that lens elements in phones still were made of glass but that the industry is looking to change to plastic but this change has not been done yet. Please correct me if I am wrong and a link or two would not hurt :)

vlad0 - Friday, March 1, 2013 - link

I suggest reading this white paper as well: http://www.mediafire.com/view/?0o5oo43h8os4ba9

it deals with a lot of the limitations of a smartphone camera in a very elegant way, and the results are sublime.

http://sdrv.ms/VQ3eCd

Nokia solved several important issues the industry has been dealing with for a long time...

wally626 - Monday, March 4, 2013 - link

Although the term Bokeh is commonly used to refer to the effect in pictures of low depth of field techniques it should only be used to refer to the quality of the out-of-focus regions of such photographs. It is much more an aesthetic term than technical. Camera phones usually have such deep depth of focus that little is out of focus in normal use. However, with the newer f/2, f/2.4 phone cameras when doing close focus you can get the out of focus regions from low depth of field.

http://www.zeiss.com/c12567a8003b8b6f/embedtitelin...$file/cln35_bokeh_en.pdf

Is a very good discussion of this by Dr. Nasse of Zeiss

wally626 - Monday, March 4, 2013 - link

Someone fixed the Zeiss link to the Nasse article for me a while back but I forgot the exact fix. In any case a search on the terms Zeiss, Nasse and Bokeh should bring up the article.

huanghost - Thursday, March 21, 2013 - link

admirable

mikeb_nz - Sunday, December 22, 2013 - link

how do i calculate or where do i find the field of view (angle of view) for smartphone and tablet cameras?

thanks

oanta_william - Monday, July 20, 2015 - link

Your insight into smartphone cameras is awesome! Thanks for everything!

From your experience, would it be possible to have only the camera module on a device, with a micro-controller/SoC that has sufficient power ONLY for transmitting the unprocessed 'RAW' data via Bluetooth to another device, on which the ISP and everything else needed for image processing would be situated?

I have homework regarding this. Do you know any reference material/books that could help me?

Thanks!

solarkraft - Monday, January 9, 2017 - link

What an amazing article! Finally something serious about smartphone imaging (the processor/phone makers don't tell us ****)! Just an updated version might be cool.

albertjohn - Tuesday, November 20, 2018 - link

I like this concept. I visited your blog for the first time and became your fan. Keep posting as I am going to read it everyday.