Arm Announces Mobile Armv9 CPU Microarchitectures: Cortex-X2, Cortex-A710 & Cortex-A510

by Andrei Frumusanu on May 25, 2021 9:00 AM EST- Posted in

- SoCs

- CPUs

- Arm

- Smartphones

- Mobile

- Cortex

- ARMv9

- Cortex-X2

- Cortex-A710

- Cortex-A510

The Cortex-X2: More Performance, Deeper OoO

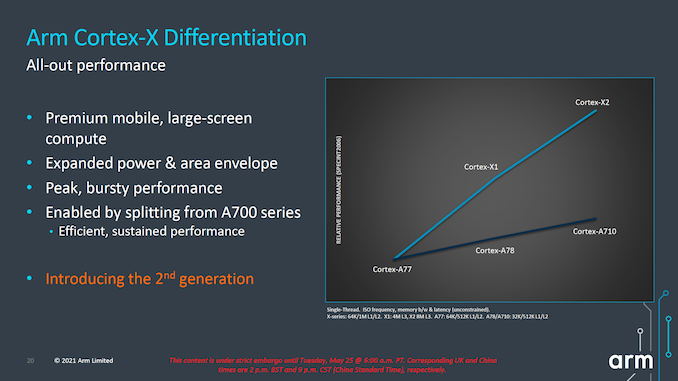

We first start off with the Cortex-X2, successor to last year’s Cortex-X1. The X1 marked the first in a new IP line-up from Arm which diverged its “big” core offering into two different IP lines, with the Cortex-A sibling continuing Arm’s original design philosophy of PPA, while the X-cores are allowed to grow in size and power in order to achieve much higher performance points.

The Cortex-X2 continues this philosophy, and further grows the performance and power gap between it and its “middle” sibling, the Cortex-A710. I also noticed that throughout Arm’s presentation there were a lot more mentions of having the Cortex-X2 being used in larger-screen compute devices and form-factors such as laptops, so it might very well be an indication of the company that some of its customers will be using the X2 more predominantly in such designs for this generation.

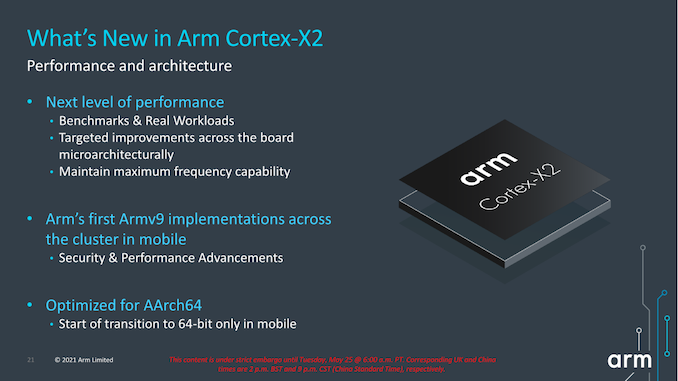

From an architectural standpoint the X2 is naturally different from the X1, thanks in large part to its support for Armv9 and all of the security and related ISA platform advancements that come with the new re-baselining of the architecture.

As noted in the introduction, the Cortex-X2 is also a 64-bit only core which only supports AArch64 execution, even in PL0 user mode applications. From a microarchitectural standpoint this is interesting as it means Arm will have been able to kick out some cruft in the design. However as the design is a continuation of the Austin family of processors, I do wonder if we’ll see more benefits of this deprecation in future “clean-sheet” big cores designs, where AArch64-only was designed from the get-go. This, in fact, is something that's already happening in other members of Arm's CPU cores, as the new little core Cortex-A510 was designed sans-AArch32.

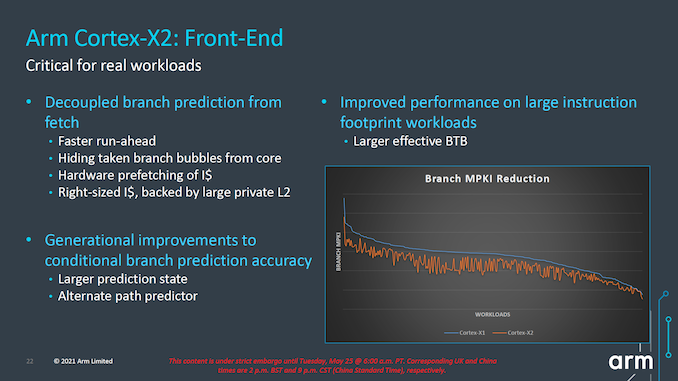

Starting off with the front-end, in general, Arm has continued to try to improve what it considers the most important aspect of the microarchitecture: branch prediction. This includes continuing to run the branch resolution in a decoupled way from the fetch stages in order to being able to have these functional blocks be able to run ahead of the rest of the core in case of mispredicts and minimize branch bubbles. Arm generally doesn’t like to talk too much details about what exactly they’ve changed here in terms of their predictors, but promises a notable improvement in terms of branch prediction accuracy for the new X2 and A710 cores, effectively reducing the MPKI (Misses per kilo instructions) metric for a very wide range of workloads.

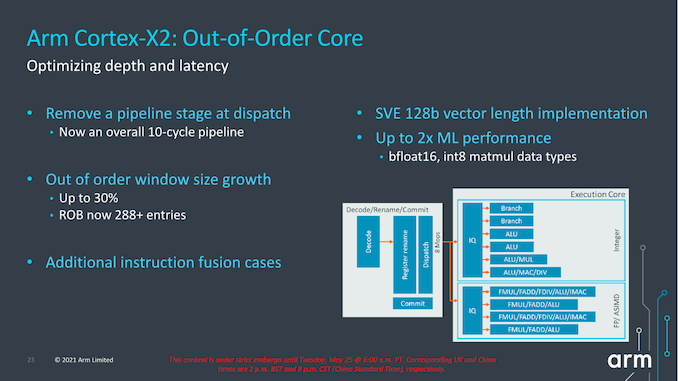

The new core overall reduces its pipeline length from 11 cycles to 10 cycles as Arm has been able to reduce the dispatch stages from 2-cycles to 1-cycle. It’s to be noted that we have to differentiate the pipeline cycles from the mispredict penalties, the latter had already been reduced to 10 cycles in most circumstances in the Cortex-A77 design. Removing a pipeline stage is generally a rather large change, particularly given Arm’s target of maintaining frequency capabilities of the core. This design change did incur some more complex engineering and had area and power costs; but despite that, as Arm explains in, cutting a pipeline stage still offered a larger return-on-investment when it came to the performance benefits, and was thus very much worth it.

The core also increases its out-of-order capabilities, increasing the ROB (reorder buffer) by 30% from 224 entries to 288 entries this generation. The effective figure is actually a little bit higher still, as in cases of compression and instruction bundling there are essentially more than 288 entries being stored. Arm says there’s also more instruction fusion cases being facilitated this generation.

On the back-end of the core, the big new change is on the part of the FP/ASIMD pipelines which are now SVE2-capable. In the mobile space, the SVE vector length will continue to be 128b and essentially the new X2 core features similar throughput characteristics to the X1’s 4x FP/NEON pipelines. The choice of 128b vectors instead of something higher is due to the requirement to have homogenous architectural feature-sets amongst big.LITTLE designs as you cannot mix different vector length microarchitectures in the same SoC in a seamless fashion.

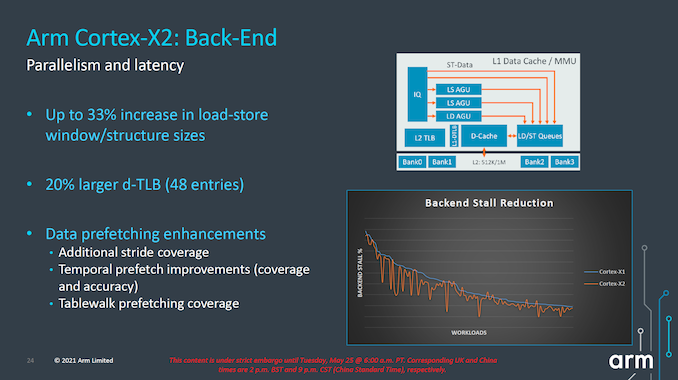

On the back-end, the Cortex-X2 continues to focus on increasing MLP (memory level parallelism) by increasing the load-store windows and structure sizes by 33%. Arm here employs several structures and generally doesn’t go into detail about exactly which queues have been extended, but once we get our hands on X2 systems we’ll be likely be able to measure this. The L1 dTLB has grown from 40 entries to 48 entries, and as with every generation, Arm has also improved their prefetchers, increasing accuracies and coverage.

One prefetcher that surprised us in the Cortex-X1 and A78 earlier this year when we first tested new generation devices was a temporal prefetcher – the first of its kind that we’re aware of in the industry. This is able to latch onto arbitrary repeated memory patterns and recognize new iterations in memory accesses, being able to smartly prefetch the whole pattern up to a certain depth (we estimate a 32-64MB window). Arm states that this coverage is now further increased, as well as the accuracy – though again the details we’ll only able to see once we get our hands on silicon.

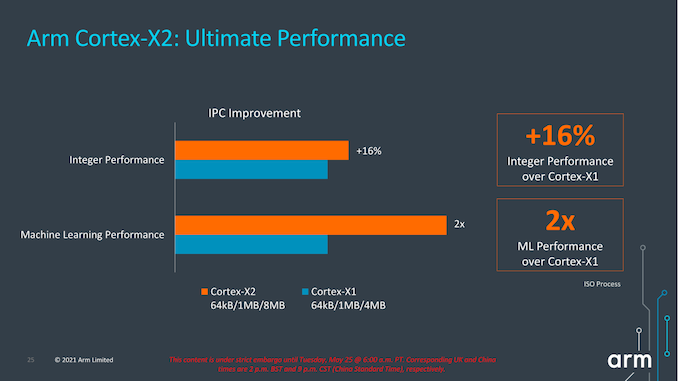

In terms of IPC improvements, this year’s figures are quoted to reach +16% in SPECint2006 at ISO frequency. The issue with this metric (and which applies to all of Arm’s figures today) is that Arm is comparing an 8MB L3 cache design to a 4MB L3 design, so I expect a larger chunk of that +16% figure to be due to the larger cache rather than the core IPC improvements themselves.

For their part, Arm is reiterating that they're expecting 8MB L3 designs for next year’s X2 SoCs – and thus this +16% figure is realistic and is what users should see in actual implementations. But with that said, we had the same discussion last year in regards to Arm expecting 8MB L3 caches for X1 SoCs, which didn't happen for either the Exynos 2100 nor the Snapdragon 888. So we'll just have to wait and see what cache sizes the flagship commercial SoCs end up going with.

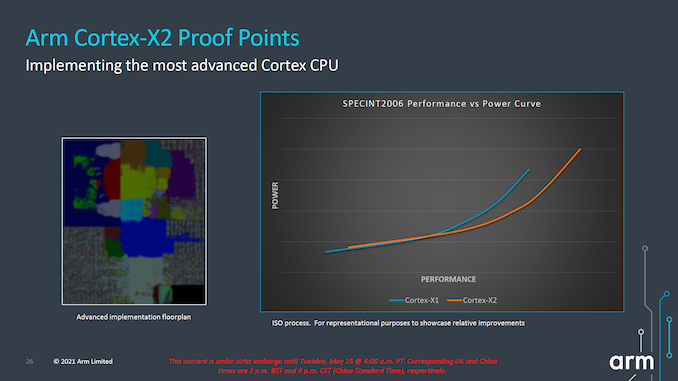

In terms of the performance and power curve, the new X2 core extends itself ahead of the X1 curve in both metrics. The +16% performance figure in terms of the peak performance points, though it does come at a cost of higher power consumption.

Generally, this is a bit worrying in context of what we’re seeing in the market right now when it comes to process node choices from vendors. We’ve seen that Samsung’s 5LPE node used by Qualcomm and S.LSI in the Snapdragon 888 and Exynos 2100 has under-delivered in terms of performance and power efficiency, and I generally consider both big cores' power consumption to be at a higher bound limit when it comes to thermals. I expect Qualcomm to stick with Samsung foundry in the next generation, so I am admittedly pessimistic in regards to power improvements in whichever node the next flagship SoCs come in (be it 5LPP or 4LPP). It could well be plausible that we wouldn’t see the full +16% improvement in actual SoCs next year.

181 Comments

View All Comments

mode_13h - Tuesday, June 1, 2021 - link

> some CPUs did mark the instruction boundaries in the cache.Not surprising, other than how far back you say it went.

mode_13h - Wednesday, May 26, 2021 - link

All good points. People who think "ISA doesn't matter" don't really understand everything an ISA encompasses.GeoffreyA - Wednesday, May 26, 2021 - link

The difference that divides them is that CISC can include a memory operation as part of an arithmetic one, whereas in RISC the two are separate (at least, in a load-store architecture).mode_13h - Wednesday, May 26, 2021 - link

> The difference that divides them is that CISC can include a memory operationI'm not an expert on the subject, but there are other elements in RISC orthodoxy, concerning things like:

* number of operands (also number of src & dst operands)

* encoding of immediates

* side-effects

I view the whole subject of CISC vs. RISC as something like MMA (Mixed Martial Arts). It turns out that there's no single best classical martial arts style. The most effective fighters use a blend of techniques adopted from various, disparate fighting styles.

GeoffreyA - Thursday, May 27, 2021 - link

"The most effective fighters use a blend of techniques"Absolutely. It's almost a universal principle that the winning design puts together the best elements from competing designs and throws away the junk.

Thala - Tuesday, May 25, 2021 - link

It does not matter that they are RISC-like inside, the issue with x86 is that they still carry the typical CISC baggage - and no internal RISC-like structure will help them here.km1810vm4 - Wednesday, May 26, 2021 - link

I would say x86 baggage. There were much nicer CISC architectures around at the time, like the Motorola 68000.TheinsanegamerN - Wednesday, May 26, 2021 - link

Intel's p5 processors implemented RISC style micro ops into their x86 decoding, that's part of why they were so dramatically faster then 486. It's not like this is hard to find out....mode_13h - Thursday, May 27, 2021 - link

> Intel's p5 processors implemented RISC style micro ops into their x86 decodingIn fact, early CPUs used a lot of microcode, and one of the ways they got faster was by replacing microcoded operations with hardwired logic. This was enabled by ever-increasing transistor budgets.

> that's part of why they were so dramatically faster then 486.

This feels like a bit of revisionist history. Here are some of the reasons why Pentium was faster than 80486:

https://en.wikipedia.org/wiki/P5_(microarchitectur...

Kamen Rider Blade - Tuesday, May 25, 2021 - link

Android needs to get their developers to stop using Java and use C/C++/Rust for their apps to eek out the max performance possible.Apple's App code base is generally C/C++, that's why they have the performance that they have.

And it's a long time nagging issue that I wish the Android community would solve.

It would give Android a HUGE performance boost to move their Apps over to C/C++/Rust.

Programming Languages have already been benchmarked against each other to see which one's faster and sorry to say it, but the C/C++/Rust family win the day in terms of Code Speed while using the same hardware platform.

https://benchmarksgame-team.pages.debian.net/bench...