Intel Xe Graphics: An Interview with VP Lisa Pearce

by Dr. Ian Cutress on November 11, 2020 2:00 PM EST

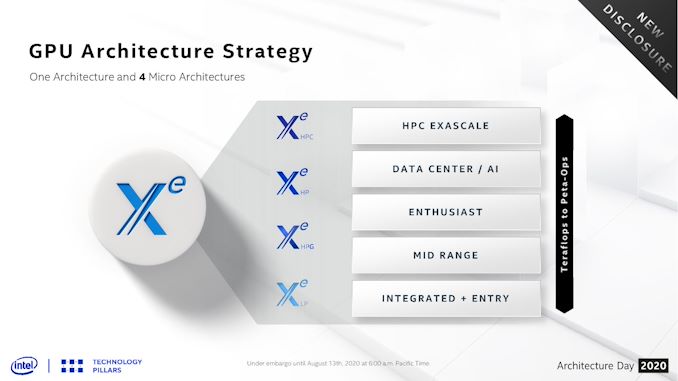

Bringing a new range of hardware to market is not an easy task, even for Intel. The company has an installed base of its ‘Gen’ graphics in hundreds of millions of devices around the world; however, the range of use is limited, and it doesn’t tackle every market. This is why Intel started to create its ‘Xe’ graphics portfolio. The new graphics design isn’t just a single microarchitecture – by mixing and matching units where needed, Intel has identified four configurations that target key markets in its sights, ranging from the TeraFLOPs needed at the low end up to the Peta-OPs needed for high performance computing.

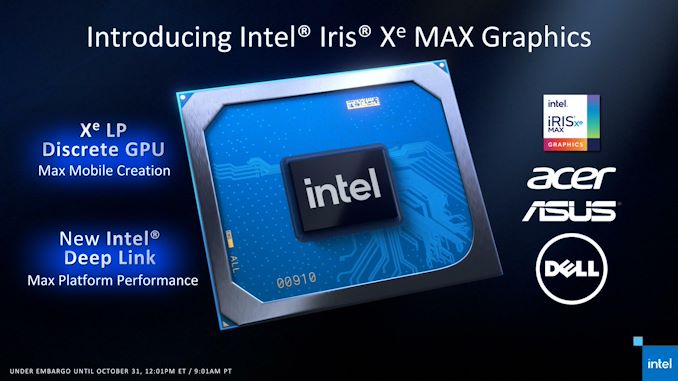

These four segments start with Xe-LP (Low Power) for integrated and low-range use, Xe-HPG (High Performance Gaming) for the gaming market, Xe-HP (High Performance) for the enterprise market, and Xe-HPC (High Performance Computing) targeting supercomputers. We already have Xe-LP in the market today with Intel’s Tiger Lake mobile solutions, as well as Iris Xe Max discrete laptop graphics, expected to also come to desktop soon – the other three are in various stages of development, using a variety of process node technologies and the leading-edge of Intel’s packaging expertise.

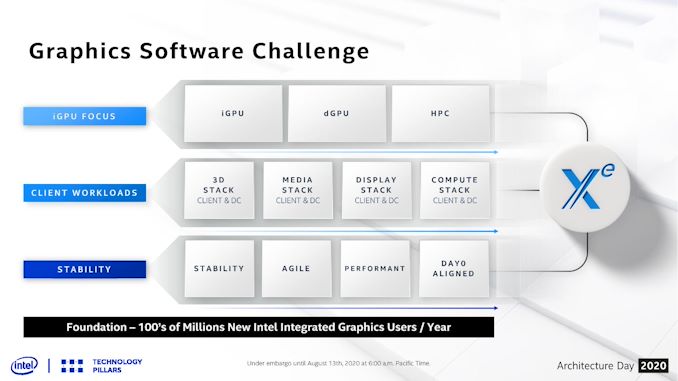

Alongside all the hardware aspects come two key elements that must never be forgotten: drivers and software. With tens of millions of developers that are used to Gen-style optimizations, migrating to an Xe style of thinking takes time and comes with hurdles to adoption, so a driver and software strategy is key to greasing the wheels of the machine. Gaming performance would be nowhere without the right driver stack, or in-game optimizations for rendering and effects. Without the right driver or software approach, compute performance for key server and HPC markets would be confined to the most hardcore of bare-metal programmers.

Dr. Ian Cutress, AnandTech (@IanCutress)

Lisa Pearce, Intel (@gfxlisa)

Leading the charge on the driver and software side of the equation is Intel’s Lisa Pearce. Lisa is a 23-year veteran of Intel who has been consistently involved in Intel’s driver application and optimization, as well as collaboration with independent software vendors. She now stands as the Director and VP of Intel’s Architecture, Graphics and Software Group, and Director of Visual Technologies. Everything under that bucket of Intel’s graphics strategy as it pertains to drivers and software, all the way from integrated graphics through gaming and enterprise into high-performance computing, is in Lisa’s control.

This includes Intel’s oneAPI strategy, as Intel attempts to combine all of its programmable hardware under one software ecosystem that can disaggregate the programming model from the hardware through abstraction and pre-optimized libraries. This includes Level Zero, a direct-to-metal interface for XPUs (GPU, FPGA, anything that isn’t a CPU), compute acceleration, and an effort to enable cross-operating system code re-use to streamline efforts and performance parity for both client gaming and enterprise workflows.

In our interview, I was afforded an opportunity to quiz Lisa on Intel’s approach. Trying to put everything under one umbrella is a big task, especially when each little niche has its own foibles. Gaming is obviously a big part of our audience, so we also covered Intel’s increased strategy for a streamlined gaming experience when the big hardware is ready. This will come across as more of a holistic view than a deep dive into how specific cogs of the machine work, especially with Lisa’s wide remit and the fact that Xe to the masses is still very much a work in progress.

A video recording of the interview is provided at the bottom of the page.

Ian Cutress: From a gamer’s perspective, we already have some software strategy with the Xe-LP integrated graphics and the new Iris Xe MAX discrete GPU – what does Intel’s software and driver strategy look like as we move into the bigger discrete options and high performance compute?

Lisa Pearce: We’ve been spending two decades on integrated graphics. We’re getting to the point where stability is there, we feel like we have a strong driver base, and now it’s about running fast on performance, the agility on releases. You saw a lot of that in the last year with day-zero drivers for the first time; we want to get to a pace where we’re consistently releasing and striking that balance between users who want stability and users that want to run fast the new game off the shelf. A lot of the capabilities you can see, like Instant Game Tuning, Day-0 driver releases, we are getting that engine running that we know is critical for client gamers especially. I think that you will only see that increase in the next year. You’ll see driver releases for games earlier, tuning earlier, and then of course server is a whole different ballgame.

IC: As we move from a Gen ecosystem to an Xe ecosystem, is software now truly a first class citizen of Intel’s strategy?

LP: Oh absolutely. It’s a major change for us – software first. We try and have that driver base that looks across [the industry], and you want to start with the workloads of what you’re seeing, and that drives your plans for the future. It’s a much different mindset, a software-first mindset.

IC: You mentioned day-zero (day-0) game support, which is something we’re starting to experience moving from Gen to the new Xe. Can you talk us through how that strategy is going to evolve as it relates to gaming and driver updates – is Intel committed to a regular driver update schedule?

LP: The driver update schedule is an interesting one! So I'll tell you our blunt mindset which is to release when you have something to release! So I don't know if we will do something where it's just on a fixed cadence. We tend [It tends] to be pretty often that we're posting [updates], but we want to post with purpose - we don't want to just post just because it's the time. So we try to make sure we bring quality drivers, optimal drivers. We built an engine so that we can release anytime we can, right? The cadence is there. That was a major change that we made in the last year and a half. For us we started with more frequent releases, then you want good functionality, good tuning. And at some point, you want to be able to say [if we] can decouple some of my tuning from my driver delivery, which is now Intel’s Instant Game Tuning. We built that out piece by piece, and ultimately we built the engine so we can [enable] releases any [time] we need it.

IC: If I can offer one suggestion – driver updates [numbers] are sometimes difficult to follow on which date is which!

LP: Absolutely! Interesting you bring that up! We did almost change that, but we held back. So I’m curious [you mention it], it’s very good to know if it’s March, April, May. So we’re working on that. I can say that one of the things you might notice is that we have a completely chronological build order – we have one trunk and we’re releasing from that, so you will always know it’s an ever-increasing driver base. We don’t have old branches or point fixes. But we do want to make it easier to know what month, what timeline that is. Good input! It’s definitely been on the mind. I think the challenge is that our integrated graphics space is very tied to our current numbering system, and so that’s what we’ve been trying to work through.

IC: Speaking of the integrated graphics – we have Gen graphics in hundreds of millions of devices, where developers are very familiar with the architecture and the tools. How much of a paradigm shift is it going to be to move from a Gen [ecosystem] to an Xe ecosystem? How involved is Intel going to be when it comes to teaching developers how to optimize for the new hardware?

LP: As you look at it, it’s still a GPU. So from a Windows aspect, the APIs are still similar. I think for the developer, it’s more about local memory tuning, or when we have a Deep Link scenario (where the CPU and GPU work together), that’s going to be guidance. But still, the developers are generally looking at a GPU and the APIs they’ve always seen. Underneath that looks very different. We expect to see a lot of consistency [for developer tools] as they move over to Xe and there is a very strong engagement with the application vendors and developers on tuning. There is also balance when you have multiple GPUs in the system.

IC: You’ve mentioned recently about conversions from CUDA and OpenCL through to Xe and oneAPI – is that going to be a learning process as well?

LP: I would expect it to be! The best person to speak to that is Jeff (McVeigh), because he is in the middle of that porting. We kind of see it as it comes down to us at level zero, and to see the benefit of looking at the back-end of it, which is the beautiful part of being in the driver space. Jeff is focused on how we are working there.

IC: The idea is to make sure that everyone is catered for, regardless of GPU, XPU?

LP: Correct. We want to make it easier out of the gate to get applications over to oneAPI. Then obviously you can do more tuning, a kind of deeper touch into a certain XPU if you wanted to tune it right, but coming out of the gate [porting to oneAPI] shouldn’t be such a heavy lift.

IC: For users who haven’t heard of oneAPI before, can you give a brief overview of what oneAPI is and what Intel’s vision for it is?

LP: Ultimately we want to have one platform that you can say is the contract for developers [between software and hardware]. It’s something that can be relied upon to have consistent interfaces, and is consistently evolving with tools and compatibility to work across the different areas of heterogeneous compute. It’s trying to be a complete suite that’s not one specific hardware vendor or one class [of hardware]. It’s not one for narrow usage, it really is a critical framework for developers over the years on XPUs.

IC: So develop your code, then compile for the target, and you can change targets at will – that’s the ultimate goal?

LP: The ultimate goal, absolutely.

IC: With Xe, we know that there are going to be four variations: LP, HPG, HP and HPC. How much opportunity is there for synergy between the driver stacks and the development tools between the four?

LP: The driver stacks is one driver team, and then we have one code base across all of that. So it’s not separate teams, it’s not separate code bases, there will be a consistent underlying plumbing for one driver base. Now there are developer tools above that, and we’re trying to have one consistent aspect of developer tools with a GPU. Obviously there are some unique things when you look at a pure compute versus a pure rendering usage. So there will be some catering of the tools required based on each of those, and then we have additional oneAPI toolkits. But there is no separate team. Our [approach to] compute is consistent across from Integrated all the way through to HPC. There will obviously be some changes in kernel drivers, in other parts of the tuning or tools, but the [underlying] fundamental IP is the same.

IC: One of the things that Intel has mentioned is the plan to develop its open source Linux driver stack. How will this work with expected rollout timelines – should we expect immediate day zero support for the hardware in both Windows and Linux, or are there other factors involved?

LP: Our goal, first on client, is that for every PRQ (Production Release Qualification) for our hardware, we have a PV (Production Version) for our driver in both our Windows and Linux stack, with Linux enabled in open source. Now how much we can achieve the same performance up front [on day zero] is the next challenge. We have Tiger Lake, and the Linux PV driver was right after the Windows one. Now we expect that also the tuning and performance, local memory tuning, is also right there. That’s what we expect going forward.

IC: One of the criticisms of current driver stacks from Intel and AMD is that the size of the driver downloads is getting ridiculous – upwards of 300-400+ megabytes due to bundled software and the fact that you’re supporting 7/8/9 generations of GPUs with one download. Is there anything you can do to make this process more streamlined in future?

LP: We’re looking at it! If you look at the full package size, we keep a watch across us and others on the total size – I think one of the issues is the one monolithic package. So we are looking at having partial driver binaries in the future. I can’t say we have an explicit time line yet, but we are looking into that. At the same time we’re changing our installers, so you’ll see that kind of later this year or early next year as our installer interface was needing some updates. That will be coming very soon, but hopefully also not just having a full monolithic driver download and just getting the pieces you need.

IC: All of this ties into Intel’s Graphics Control Center (IGCC). There are some environments where IGCC can’t be used, such as in Linux or in a Cyber Café. This is also the vehicle through which you provide day-0 game updates. Is there a plan to enable those updates for users that don’t have IGCC – are you planning to do independent updates or downloads for those sorts of users?

LP: We’ve been trying to consider more cases where it’s easy to go to that. I’m not sure if [the answer] is outside IGCC or inside, but we’ve been trying to look into that. Definitely for any kind of Windows use, we target IGCC. For Linux, we really need to decide on a path, and we’ve been kind of polling the community on what’s most interesting [to them]. It has been asked for a while, and I’ve always wanted to do something for Linux there – so I think it may be a more simplified version, with some different dynamics for Linux and what you want in the UI, maybe how games are handled, and we’ll be taking a look at that next year.

IC: Intel’s competitors in the graphics space have their own suites of gaming features that they work on with developers to accelerate things like tessellation, hair, even ray tracing – just optimized code bases and libraries. They get joint promotion as a result. What is Intel’s strategy here – is Intel going to start developing its own code packages to help with games, or is it going to push for more standardized support and libraries based on industry standards?

LP: The general methodology would be to always start with consistent APIs and standards. Of course we are always going to look at if there’s a reason that [performance] is holding something back in particular assets. But we will start with the industry standard APIs and packages for sure.

IC: As we move into 2021, we’re going to start talking about discrete graphics options for gaming and client beyond Iris Xe MAX. Can you talk to how Intel is integrating with game studios that are planning to launch AAA games and whether it is working with performance optimizations in the driver stack to deal with that?

LP: We are trying to engage earlier and earlier, especially into the engine space, and there are active discussions there about how much optimization we could put up front. So we’re just pushing into that space. First it starts with having an optimal high-end GPU, and you can go build more momentum from there. It is a heavy focus!

IC: We have Tiger Lake in the market, as well as Iris Xe MAX, and then HP, HPG and HPC coming through 2021 and 2022. Some of these deadlines are fast approaching – would you say that your team is sweating a little to ensure seamless launches?

LP: My people say they’re always sweating a little! There is no time for rest, and it’s an extremely exciting time. We are very eager, very excited, and yes it is intense. We love it that way – we love the challenge!

IC: One current line of thinking is that when buying a graphics card it might be used for gaming, or maybe it will be used for developing compute software to run alongside. Should a user want to, are there opportunities for a user today to program DG1 for compute? Can they just pull the oneAPI stack and use that for a compute task rather than a gaming-focused one?

LP: Absolutely. It’s the reason we put Level Zero in our Windows driver as well, it’s been in the driver since March or early April.

IC: What exactly is Level Zero?

LP: Level Zero is our hardware abstraction layer for XPUs. The first one is for the GPU, and Level Zero is a specification not intended solely for Intel products. You can see it in open source repositories, all the fine grained controls for GPUs, FPGAs, and others. It’s a consistent hardware abstraction layer across the operating systems and it is the backbone for oneAPI at the device layer.

IC: We are seeing some use cases where users will have multiple GPUs in a system, perhaps even multiple GPUs from different vendors, for use as accelerators in gaming or as accelerators in compute. How does Intel consider this approach – will the drivers play nicely with other vendors? Is this part of your testing for every driver release?

LP: Yes. It already plays nice in some degree, as we have had our drivers paired with others on mobile systems for some time. Obviously we are increasing that testing with discrete graphics as well. This also comes in an Intel plus Intel combo, not just other combinations, and you can see some of that with Intel’s Deep Link as well as an Intel plus Intel combination.

IC: [With everything that Intel has to get ready,] We don’t expect the first discrete hardware launches from Intel to necessarily be VR focused, but how are you looking at that market – have these products been built with a VR focus in mind, as it’s also a professional use case? How is Intel approaching its strategy in order to enable developers in VR?

LP: There has been a strong investment on VR [at Intel]. I think now that as we get into higher performance GPUs, [VR] will absolutely be a target for next year.

IC: Another one of Intel’s key targets is going to be cloud gaming, as well as cloud compute. What would be the Intel plus-point for customers looking to buy that sort of solution? What can Intel bring to the table, whether it comes to virtualization or security? How is Intel aligning with large CSP customers?

LP: We have the broad software stack, enabling container stacks, the security that we would have on client. The same touch as what we have with firmware updates, DRM, security. So you should expect us to have a full stack and to optimize that full stack. I think that as we go and push through that, people will see the performance that we can achieve there, and it’s really taking that hardening we have had from previous generations and bringing it to CSPs. But the software enabling you’ve seen, and how we’re doing releases for Linux distros, we will continue to build out and that’s a key aspect for them.

IC: Is there something that you want the public to know that hasn’t necessarily been focused on with regards to the driver approach and Intel’s strategy?

LP: I think that the thing that we would want everyone to know is that we are preparing for the scale that is in front of us with our eyes wide open. We are coming from a strong base, having done integrated graphics for two decades. We are eager to go and unleash that in all these different segments, and we are more excited than ever. We know it won’t be easy, and we know the challenges ahead. We know where we’re coming from, and we just can’t wait to show the hard work that’s been happening inside Intel’s walls.

IC: There’s an Intel Odyssey (Intel gaming consumer engagement) backpack behind you! The whole Odyssey train was fast paced for a while, but has slowed through the Tiger Lake launch. Do you know what is next for the whole Odyssey plan?

LP: Yes! Stay tuned! I was just talking about it yesterday. I think you’ll definitely hear more very soon. We’ve been hard at work in the last year, with Tiger Lake which is an amazing product and DG1 right there with it, which is Iris Xe MAX. So you know, we’ve been very heads-down and you will hear more about Odyssey very soon.

Many thanks to Lisa and the Intel team for their time.

