Imagination Announces B-Series GPU IP: Scaling up with Multi-GPU

by Andrei Frumusanu on October 13, 2020 4:00 AM EST

Posted in: GPUs, Imagination Technologies, SoCs, IP

It’s been almost a year since Imagination announced its brand-new A-Series GPU IP, a release which at the time the company called its most important in 15 years. The new architecture indeed marked some significant updates to the company’s GPU IP, promising major uplifts in performance and much-improved competitiveness. Since then, other than a slew of internal scandals, we’ve heard very little from the company – until today’s announcement of its next generation of IP: the B-Series.

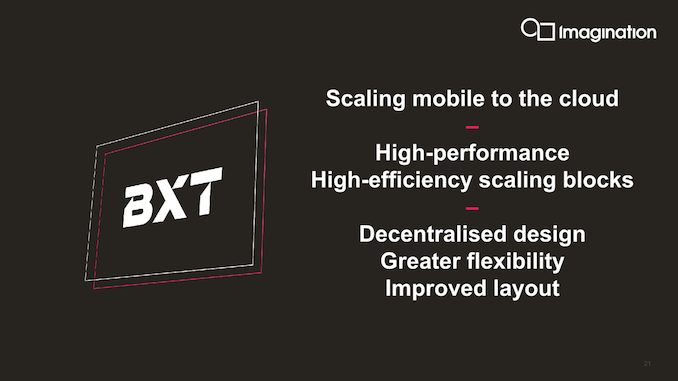

The new Imagination B-Series is an evolution of last year’s A-Series GPU IP release, further iterating through microarchitectural improvements, but most importantly, scaling the architecture up to higher performance levels through a brand-new multi-GPU system, as well as the introduction of a new functional safety class of IP in the form of the BXS series.

The Market Demands Performance: Imagination Delivers it through Multi-GPU

It’s no secret that the current GPU IP market has been extremely tough on IP providers such as Imagination. As the only other established IP provider alongside Arm, the company has been seeing an ever-shrinking list of customers due to several factors – one being Arm’s extreme business competitiveness in offering both CPU and GPU IP to customers, another being that there are simply fewer customers that require licensed GPU IP.

Amongst the current SoC vendors, Qualcomm and its in-house Adreno GPU IP are in a dominant market position, and in recent years have been putting extreme pressure on other vendors – many of whom fall back to Arm’s Mali GPU IP by default. MediaTek had historically been the one SoC vendor that used Imagination’s GPUs more often in its designs; however, all of the recent Helio or Dimensity products again use Mali GPUs, with seemingly little hope for an SoC win using IMG’s GPU IP.

With Apple using their architectural license from Imagination to design custom GPUs, Samsung betting on AMD’s new aspirations as a GPU IP provider, and HiSilicon both designing their own in-house GPU as well as having an extremely uncertain future, there’s very little left in terms of mobile SoC vendors which might require licensed GPU IP.

What is left are markets outside of mobile, and it’s here that Imagination is trying to refocus: High-performance computing, as well as lucrative niche markets such as automotive which require functional safety features.

Scaling an IP up from mobile to what we would consider high-performance GPUs is a hard task, as this directly impacts many of the architectural balance choices that need to be made when designing a GPU IP that’s actually fit for a low-power market such as mobile. Traditionally, this has always been a trade-off between absolute performance, performance scalability and power efficiency – with high-performance GPUs simply being not that efficient, while low-power mobile GPUs were unable to scale up in performance.

Imagination’s new B-Series IP solves this conundrum by introducing a new take on an old way of scaling performance: multi-GPU.

Rather than growing and scaling a single GPU up in performance, you simply use multiple GPUs. Now, probably the first thing that will come to readers’ minds are parallels to multi-GPU technologies from the desktop space such as SLI or Crossfire, technologies that in recent years have seen dwindling support due to their incompatibility with modern APIs and game engines.

Imagination’s approach to multi-GPU is completely different to past attempts, and the main difference lies in the way workloads are handled by the GPU. With the B-Series, Imagination moves away from a “push” workload model, where the GPU driver pushes work to the GPU to render, to a “pull” model, where the GPU decides to pull workloads to process. This is a fundamental paradigm shift in how the GPU is fed work and allows for what Imagination calls a “decentralised design”.

Amongst a group of GPUs, one acts as a “primary” GPU with a controlling firmware processor that divides a workload, say a render frame, into different work tiles that the other “slave” GPUs can then pull from in order to work on. A tile here is meant in the proper sense of the word, as the GPU’s tile-based rendering aspect is central to the mechanism – this isn’t your classical alternate frame rendering (AFR) or split frame rendering (SFR) mechanism. Also, just as a single-GPU tile-based renderer can have varying tile sizes for a given frame, this can also happen in the B-Series’ multi-GPU workload distribution, with varying tile sizes of a single frame being distributed unevenly amongst the GPU group.
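
To make the “pull” idea more concrete, here is a minimal Python sketch of pull-based tile distribution. It is purely illustrative and not Imagination’s firmware or driver interface: the function names (`split_into_tiles`, `render_frame`), the fixed tile size, the use of threads as stand-ins for GPUs, and the assumption that every GPU in the group pulls from one shared queue are all hypothetical simplifications.

```python
import queue
import threading


def split_into_tiles(width, height, tile):
    """Divide a frame into (x, y, w, h) tiles; tiles on the right and
    bottom edges are clipped so they never extend past the frame."""
    return [
        (x, y, min(tile, width - x), min(tile, height - y))
        for y in range(0, height, tile)
        for x in range(0, width, tile)
    ]


def render_frame(width, height, tile, num_gpus):
    """Hypothetical model: one party fills a shared queue with tiles, and
    each 'GPU' (a thread here) pulls the next tile whenever it is idle."""
    work = queue.Queue()
    for t in split_into_tiles(width, height, tile):
        work.put(t)

    # Each GPU records the tiles it processed in its own list.
    done = {gpu_id: [] for gpu_id in range(num_gpus)}

    def gpu_worker(gpu_id):
        while True:
            try:
                t = work.get_nowait()  # pull: the worker asks for work
            except queue.Empty:
                return                 # nothing left for this frame
            done[gpu_id].append(t)     # stand-in for shading the tile

    threads = [
        threading.Thread(target=gpu_worker, args=(gpu_id,))
        for gpu_id in range(num_gpus)
    ]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return done


if __name__ == "__main__":
    result = render_frame(1920, 1080, 32, 4)
    for gpu_id, tiles in result.items():
        print(f"GPU {gpu_id} processed {len(tiles)} tiles")
```

Because each worker takes a new tile only when it has finished the previous one, the split between GPUs is not fixed in advance and can come out uneven, which is the property that distinguishes this scheme from a static AFR or SFR partition.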

Most importantly, this new multi-GPU system that Imagination introduces is completely transparent to the higher-level APIs as well as software workloads, which means that a system running a multi-GPU configuration just sees one single large GPU from a software perspective. This is a big contrast to current discrete multi-GPU implementations, and is why Imagination’s multi-GPU technology is a lot more interesting.

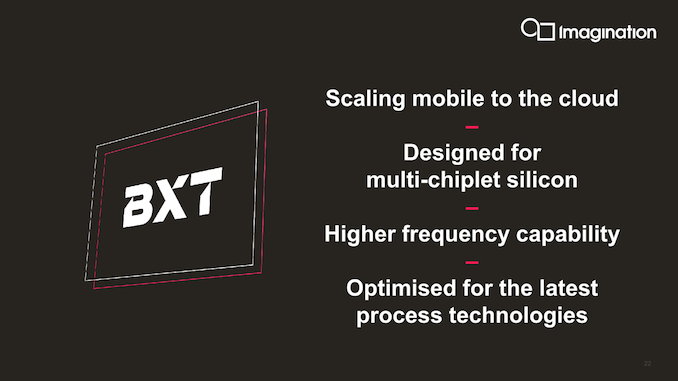

From an implementation standpoint, it allows Imagination and their customers a ton of new flexibility in terms of configuration options. From Imagination’s perspective, instead of having to design one large and fat GPU implementation, which might require more work due to timing closure and other microarchitectural scaling concerns, they can just design a more efficient GPU – and allow customers to simply put down multiple of these in an SoC. Imagination claims that this allows for higher-frequency GPUs, and the company projects implementations around 1.5GHz for high-end use-cases such as cloud computing.

For customers, it’s also a great win in terms of flexibility: Instead of having to wait on Imagination to deliver a GPU implementation that matches their exact performance target, a customer can just take one “sweet-spot” building block implementation and scale the configuration themselves during the design of their SoC, allowing higher flexibility as well as a shorter turn-around time. Particularly if a customer is designing multiple SoCs for multiple performance targets, they could achieve this easily with just one hardware design from Imagination.

We’ll get into the details of the scaling on the next page, but currently the B-Series multi-GPU support scales up to 4 GPUs. The other interesting aspect of laying down multiple GPUs on an SoC, in contrast to one larger GPU, is that they do not have to be adjacent or even near each other. As they’re independent design blocks, one could do weird stuff such as putting a GPU in each corner of an SoC design.

The only requirement for the SoC vendor is to have the GPUs connected to the SoC’s standard AXI interconnect to memory – something that’s a requirement anyhow. Vendors might have to scale this up for larger MC (Multi-Core) configurations, but they can make their own choices in terms of design requirements. The other requirement to make this multi-GPU setup work is just a minor connection between the GPUs themselves: these are just a few wires that act as interrupt lines between the cores so that they can synchronise themselves – there’s no actual data traffic happening between the GPUs.

This makes the design particularly fit for upcoming multi-chiplet silicon designs. Whereas current monolithic GPU designs have trouble being broken up into chiplets in the same way CPUs can be, Imagination’s decentralised multi-GPU approach would have no issues in being implemented across multiple chiplets, and would still appear as a single GPU to software.

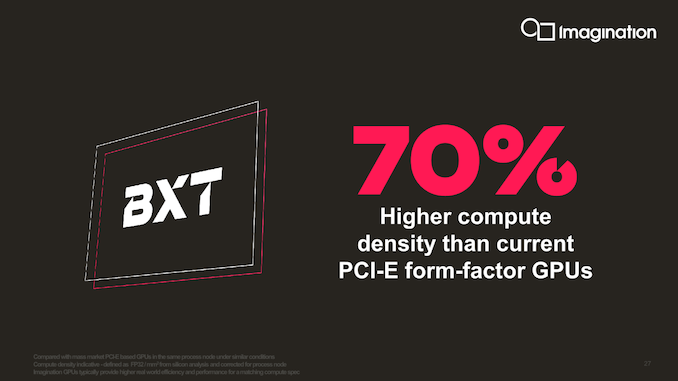

Getting back to the initial point, Imagination is using this new multi-GPU approach to target higher-performance designs that previously weren’t available to the company. They note that, through multi-GPU scaling, their more efficient mobile-derived GPU IP can compete with current offerings from Nvidia and AMD in PCIe form-factor designs (Imagination promotes their biggest configuration as reaching up to 6 TFLOPs), whilst delivering 70% better compute density – a metric the company defines as TFLOPs/mm². Whilst that metric is relatively meaningless in terms of performance, since the upper cap on performance is still very much limited by the architecture and the MC4 top scaling limit of the B-Series’ current multi-GPU implementation, it allows licensees to make smaller chips that in turn can be extremely cost-effective.
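
As a rough illustration of why compute density translates into smaller chips, the short Python sketch below works through the arithmetic. The baseline density is a made-up placeholder, not a figure from Imagination or any competitor; only the 70% improvement and the 6 TFLOPs target come from the claims above, and the helper names are hypothetical.

```python
def compute_density(tflops: float, area_mm2: float) -> float:
    """Compute density as defined in the article: TFLOPs per mm^2."""
    if area_mm2 <= 0:
        raise ValueError("area must be positive")
    return tflops / area_mm2


def area_for_target(target_tflops: float, density: float) -> float:
    """Silicon area (mm^2) needed to hit a throughput target at a density."""
    if density <= 0:
        raise ValueError("density must be positive")
    return target_tflops / density


# HYPOTHETICAL placeholder: not a real measurement of any GPU.
BASELINE_DENSITY = 0.02   # TFLOPs/mm^2
IMPROVEMENT = 0.70        # "70% better compute density" (vendor claim)
TARGET_TFLOPS = 6.0       # largest configuration promoted by Imagination

improved_density = BASELINE_DENSITY * (1 + IMPROVEMENT)
baseline_area = area_for_target(TARGET_TFLOPS, BASELINE_DENSITY)
improved_area = area_for_target(TARGET_TFLOPS, improved_density)

# The relative saving does not depend on the placeholder baseline:
# 1 - 1 / 1.7 is roughly 0.41.
area_saving = 1 - improved_area / baseline_area
print(f"Area saving at equal throughput: {area_saving:.1%}")
```

Whatever the true baseline is, a 70% density advantage works out to roughly 41% less silicon area for the same peak throughput, which is where the cost argument comes from.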

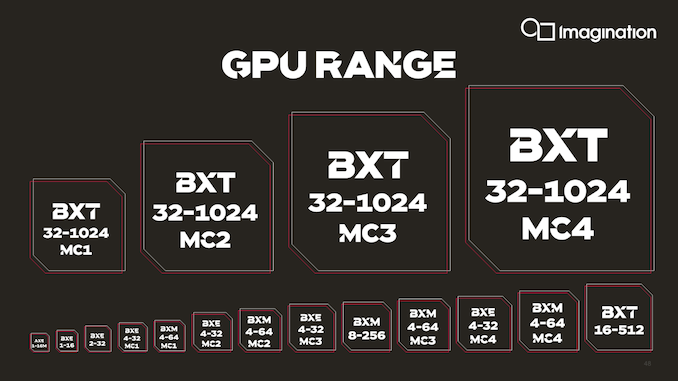

The B-Series covers a slew of actual GPU IP, with the company continuing a segmentation into different performance tiers – the BXT series being the flagship GPU designs, the BXM series a more balanced middle-ground GPU IP, and the BXE series being the company’s smallest and most area-efficient Vulkan-compatible GPU IP. Let’s go over the various GPU implementations in more detail…

74 Comments

myownfriend - Tuesday, October 13, 2020

Yea like if the back buffer were drawn with on-chip memory... like a tile-based GPU.

anonomouse - Tuesday, October 13, 2020

Probably works out-ish a bit better with a tile-based deferred renderer, since the active data for a given time will be more localized and more predictable.

myownfriend - Tuesday, October 13, 2020

The thing with tile-based GPUs is that they have less data to share between cores since the depth, stencil, and color buffers for each tile are stored on-chip. Since screen-space triangles are split into tiles and one triangle can potentially turn into thousands of fragments, it becomes less bandwidth intensive to distribute work like that. All the work that Imagination in particular has put into HSR to reduce texture bandwidth as well as texture pre-fetch stuff would also benefit them in multi-GPU configurations.

SolarBear28 - Tuesday, October 13, 2020

This tech seems very applicable to ARM Macs although Apple is probably using in-house designs.

Luke212 - Tuesday, October 13, 2020

why would i want to see 2 gpus as 1 gpu? it's a terrible idea. it's NUMA x 100

myownfriend - Tuesday, October 13, 2020

On an SOC or even in a chiplet design, they wouldn't necessarily have separate memory controllers. We're talking about GPUs as blocks on an SOC.

CiccioB - Tuesday, October 13, 2020

It simplifies things better than seeing them as 2 separate GPUs

myownfriend - Sunday, June 6, 2021

I'm gonna be a weirdo and add to something like half a year later. I'm not sure why seeing two or, in this case, four GPUs is preferable to seeing one in situations where all the GPUs are tile-based and on the same chip. Let me think out loud here.

At the vertex processing stage, you could toss triangles at each GPU and they'll transform them to screen-space then clip, project, and cull them. Their respective tiling engines then determine which tiles each triangle is in and append that to the parameter and geometry buffer in memory. I can't think of many reasons why they would really need to communicate with each other when making this buffer. After that's done, the fragment shading stage would consist of each GPU requesting tiles and textures from memory, shading and blending them in their own tile memory, and writing out the finished pixels in memory. I can't really find much in that example that makes all four GPUs work differently than one larger one.

I can see why that might be preferable with IMR GPUs though. If we were to just toss triangles at each GPU they would transform them to screen-space and clip, project, and cull them just like a TBDR. After this, a single IMR GPU would do an early-z test and, if it passes, then proceed with the fragment pipeline. This is where the first big issue comes up in a multi-GPU configuration though: overlapping geometry. Each GPU will be transforming different triangles and some of these triangles may overlap. It would be really useful for GPU0 to know if GPU1 is going to write over the pixels it's about to work on. This would require sharing the z-value of the current pixels between both GPUs. They could just compare z-values at the same stages, but unless they were synced with each other, that wouldn't prevent GPU0 from working on pixels that already passed GPU1's early z-test and are about to be written to memory. Obviously, that would result in a lot of unnecessary on-chip traffic, very un-ideal scaling, and possibly pixels being drawn to buffers that shouldn't have.

What might help is to do typical dual-GPU stuff like alternate frame or split-frame rendering so those z-comparisons would only have to happen between the pixels on each chip. The latter raises another problem though. Neither GPU can know what a triangle's final screen-space coordinates are until AFTER they transform it. This means if GPU0 is supposed to be rendering the top slice of the screen and it gets a triangle from the bottom of the screen or across the divide then it has to know how to deal with that. It could just send that triangle to GPU1 to render. Since they both share the same memory, it has a second option which is to do the z-comparison thing from before and GPU0 could render the pixels to the bottom of the screen anyway.

Obviously you could also bin the triangles like TBDR or give each GPU a completely separate task like having one work on the G-buffer while the other creates shadow maps or have each rendering a different program. Because there are so many ways to use two or more IMRs together and each has its drawbacks, it makes sense to expose them as two separate GPUs. It puts the burden of parallelizing them in someone else's hands. TBDRs don't need to do that because they work more like they normally would. That's why PowerVR Series 5 GPUs pretty much just scaled by putting more full GPUs on the SOC.

Obviously, these both become a lot more complicated when they're chiplets, especially if they have their own memory controllers but I won't get into that.

brucethemoose - Tuesday, October 13, 2020

Andrei, could you ask Innosilicon for one of those PCIe GPUs? Even if it only works for compute workloads, another competitor in the desktop space would be fascinating.

Also, that is a *conspicuously* flashy and desktop-oriented shroud for something that's ostensibly a cloud GPU.

myownfriend - Tuesday, October 13, 2020

I was thinking the same thing about the shroud.